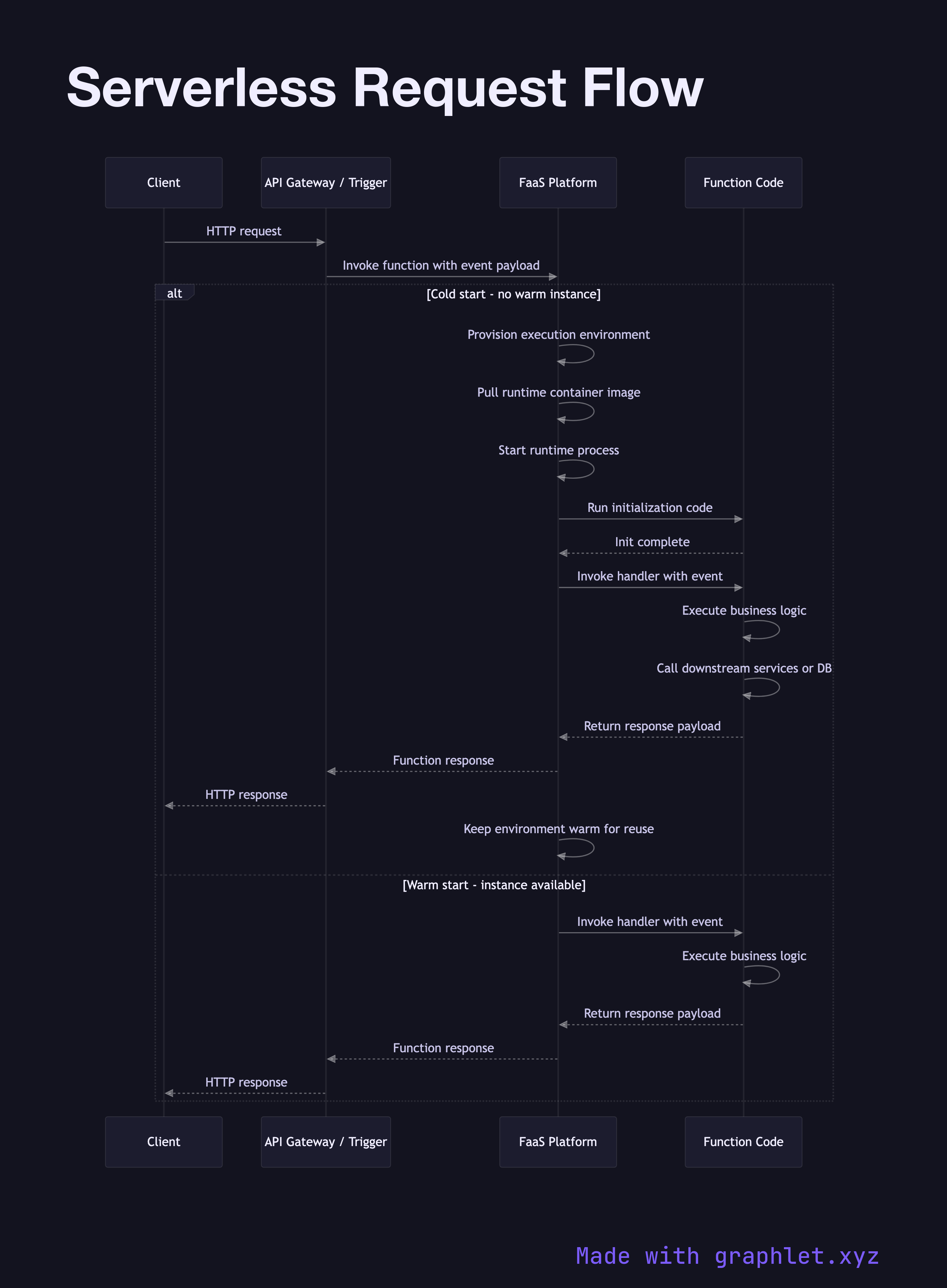

Serverless Request Flow

A serverless request flow describes how an HTTP request triggers the lifecycle of a Function-as-a-Service (FaaS) — from cold-start initialization through execution and response — on platforms like AWS Lambda, Google Cloud Functions, or Azure Functions.

What the diagram shows

This sequence diagram involves four participants: Client, API Gateway / Trigger, FaaS Platform, and Function Code.

Two paths are shown:

Cold start path (no warm instance available):

1. The client sends a request that hits the API gateway (e.g., AWS API Gateway or an HTTP trigger).
2. The platform has no warm instance ready, so it must provision a new execution environment.
3. The runtime is initialized: container image pulled, runtime process started, initialization code (init phase) executed.
4. The handler function is invoked with the event payload.
5. The function executes, calls downstream services or databases, and returns a response.
6. The execution environment is kept warm briefly to serve subsequent requests.
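
The split between the init phase (step 3) and the handler invocation (step 4) can be sketched in Python. This is a minimal illustration, not platform code: the `handler(event, context)` signature follows the common AWS Lambda convention, while `CONFIG`, the `name` field, and the `cold_start` flag are hypothetical names used only for this example.

```python
import json

# Init phase: module-level code runs once per execution environment,
# during a cold start, before the first invocation.
CONFIG = {"greeting": "hello"}  # stand-in for loading config or opening connections

# Module state survives between invocations while the environment stays warm.
_invocations = 0


def handler(event, context=None):
    """Invoke phase: runs once per request with the event payload."""
    global _invocations
    _invocations += 1
    name = event.get("name", "world")
    return {
        "statusCode": 200,
        "body": json.dumps({
            "message": f"{CONFIG['greeting']}, {name}",
            # True only for the first invocation in this environment
            "cold_start": _invocations == 1,
        }),
    }
```

Anything placed at module level is paid for once per environment, so expensive setup there lengthens the cold start but is reused by every later request served by the same instance.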

Warm start path (instance already warm):

1. A subsequent request arrives while the environment is still alive.
2. The handler is invoked directly, skipping all initialization steps.
3. Response time is lower because the provisioning and initialization cost is not paid again.
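
The routing decision between the two paths can be modeled with a toy simulation. This is a sketch under stated assumptions, not how any real platform is implemented: `ToyPlatform`, `Instance`, and the `keep_warm_seconds` idle window are hypothetical, and real providers do not guarantee a fixed keep-warm duration.

```python
import time
from dataclasses import dataclass, field


@dataclass
class Instance:
    last_used: float
    invocations: int = 0


@dataclass
class ToyPlatform:
    # Assumed idle window; real platforms choose this themselves.
    keep_warm_seconds: float = 300.0
    idle: list = field(default_factory=list)

    def invoke(self, event, now=None):
        now = time.monotonic() if now is None else now
        # Discard environments that have been idle longer than the window.
        self.idle = [
            i for i in self.idle
            if now - i.last_used <= self.keep_warm_seconds
        ]
        if self.idle:
            # Warm path: reuse an existing environment, no initialization.
            instance, path = self.idle.pop(), "warm"
        else:
            # Cold path: provisioning and the init phase would happen here.
            instance, path = Instance(last_used=now), "cold"
        instance.invocations += 1
        instance.last_used = now
        self.idle.append(instance)  # keep the environment for later requests
        return {"path": path, "echo": event}
```

For example, with `ToyPlatform(keep_warm_seconds=10)`, a request at `now=0` takes the cold path, one at `now=5` takes the warm path, and one at `now=20` is cold again because the only instance has been idle for 15 seconds.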

Why this matters

Cold starts are one of the main operational challenges of serverless architectures. A Java or .NET function with heavy dependency loading can add hundreds of milliseconds, and in some cases seconds, to the first request after an idle period or during scale-out to new instances. Strategies to mitigate cold starts include provisioned concurrency (pre-warming instances), minimizing dependency footprint, and using runtimes with faster startup times (Go, Rust, Node.js).
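
One way to reduce init-phase work, related to minimizing dependency footprint, is to defer loading a dependency until a request actually needs it. The sketch below is illustrative only: `get_heavy_module`, the `action` and `values` event fields, and the use of the standard-library `decimal` module as a stand-in for a heavy dependency are all assumptions made for this example.

```python
import functools
import importlib


@functools.lru_cache(maxsize=None)
def get_heavy_module(name="decimal"):
    """Import a dependency on first use and cache it for warm invocations."""
    return importlib.import_module(name)


def handler(event, context=None):
    if event.get("action") == "sum":
        # Only this code path pays the import cost, and only once
        # per execution environment.
        decimal = get_heavy_module()
        total = sum(decimal.Decimal(x) for x in event["values"])
        return {"statusCode": 200, "body": str(total)}
    # Lightweight path: no heavy dependency is loaded.
    return {"statusCode": 200, "body": "pong"}
```

Lazy loading does not remove the cost; it moves it from the init phase to the first request that needs the dependency. It tends to help when only some requests use the heavy code path, and it is a weaker fit when every request needs it.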

For edge deployments with even lower latency requirements, see Edge Function Execution. For long-running async workloads that don't fit the serverless request-response model, see Background Job Processing.