Write Through Cache

Write-through caching is a strategy where every write operation updates both the cache and the database synchronously before the write is acknowledged to the client, ensuring the cache never holds data that differs from the database.

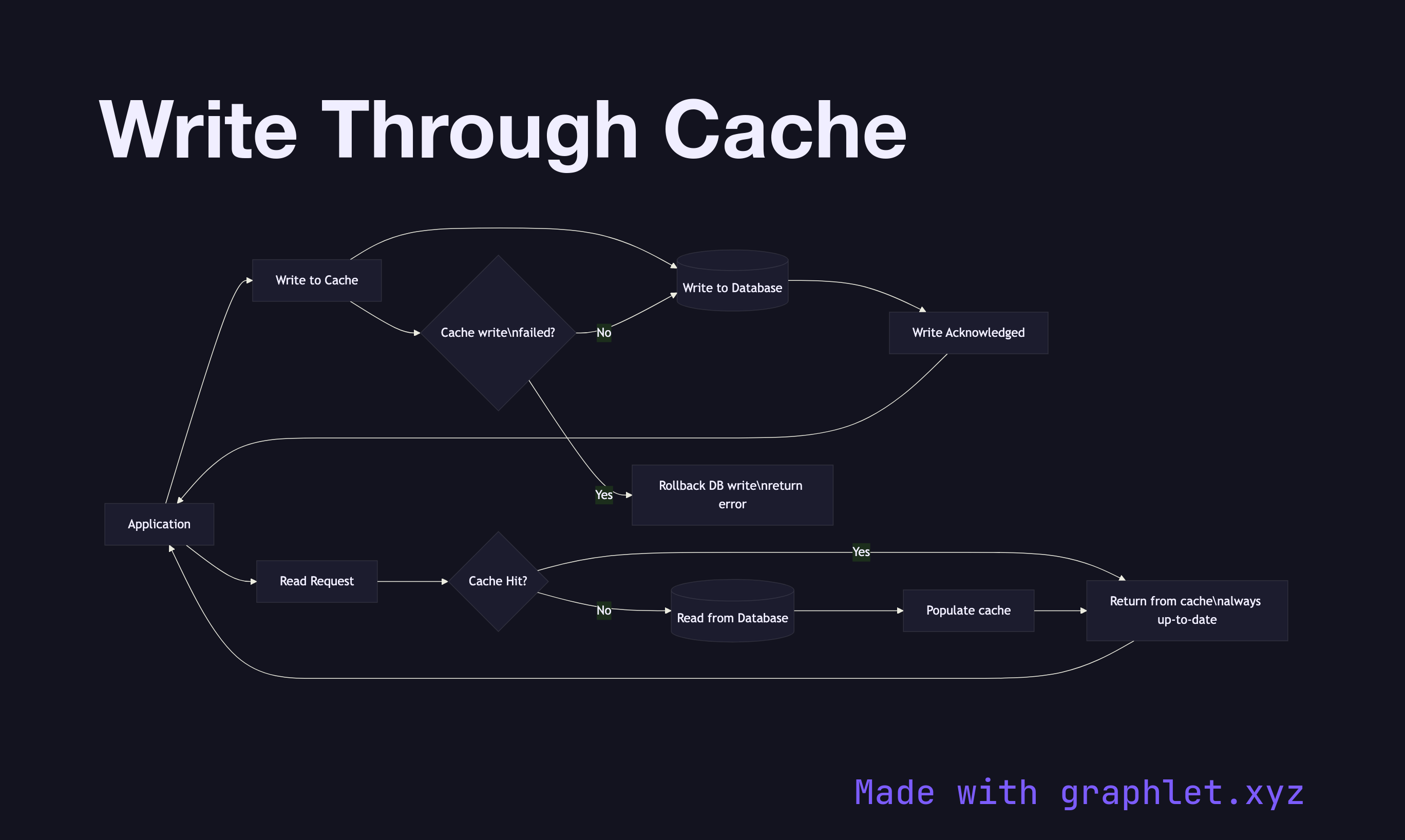

This diagram shows the write path. When the application writes data, the cache layer intercepts the write, persists the new value to the cache, then immediately forwards the write to the database. Only after both the cache write and the database write complete successfully is the response returned to the application. If either write fails, the entire operation is treated as failed.
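The write path above can be sketched as a small Python class. This is a minimal illustration, not a reference implementation. `Database`, `WriteThroughCache`, `put`, and the `fail_next_write` hook are hypothetical names, and the database is an in-memory dict. The source says a failed write fails the whole operation but does not say how the cache is cleaned up. This sketch assumes the cache write is undone when the database write fails, so the cache never keeps a value the database rejected.

```python
from typing import Any, Dict, Hashable


class Database:
    """Hypothetical in-memory stand-in for the backing database."""

    def __init__(self) -> None:
        self.rows: Dict[Hashable, Any] = {}
        self.fail_next_write = False  # hypothetical hook to simulate a failed write

    def write(self, key: Hashable, value: Any) -> None:
        if self.fail_next_write:
            self.fail_next_write = False
            raise IOError("simulated database write failure")
        self.rows[key] = value


class WriteThroughCache:
    """Sketch of a write-through layer: cache first, then database, ack after both."""

    _MISSING = object()  # sentinel for "key was not cached before this write"

    def __init__(self, db: Database) -> None:
        self.db = db
        self.store: Dict[Hashable, Any] = {}

    def put(self, key: Hashable, value: Any) -> None:
        previous = self.store.get(key, self._MISSING)
        self.store[key] = value  # 1. write the new value to the cache
        try:
            self.db.write(key, value)  # 2. forward the write synchronously
        except Exception:
            # Assumption: roll back the cache write so cache and database
            # still agree, then report the failure to the caller.
            if previous is self._MISSING:
                del self.store[key]
            else:
                self.store[key] = previous
            raise
        # 3. returning normally acts as the acknowledgement to the caller


if __name__ == "__main__":
    db = Database()
    cache = WriteThroughCache(db)
    cache.put("user:1", "Ada")
    db.fail_next_write = True
    try:
        cache.put("user:1", "Grace")
    except IOError:
        pass  # the whole write is reported as failed
    print(cache.store["user:1"], db.rows["user:1"])  # Ada Ada
```

In this sketch the failed second write leaves both the cache and the database holding the earlier value. A real system would also have to deal with concurrent writers and with a process crash between the two writes, which this example does not attempt.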

The key benefit of write-through is cache coherence: the cache always reflects what is in the database. A read that follows a write hits the cache and returns the value just written, with no inconsistency window, as long as the entry has not since been evicted or expired. This makes write-through well suited to data that is read frequently, especially soon after it is written.
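The read-after-write behaviour can be checked with a small sketch that counts how often the database is read. `CountingDatabase`, `WriteThroughCache`, `put`, and `get` are hypothetical names. The fallback to the database on a miss is an assumed read path, added to show what happens once an entry is no longer cached.

```python
from typing import Any, Dict, Hashable, Optional


class CountingDatabase:
    """Hypothetical database stand-in that counts reads."""

    def __init__(self) -> None:
        self.rows: Dict[Hashable, Any] = {}
        self.reads = 0

    def write(self, key: Hashable, value: Any) -> None:
        self.rows[key] = value

    def read(self, key: Hashable) -> Optional[Any]:
        self.reads += 1
        return self.rows.get(key)


class WriteThroughCache:
    def __init__(self, db: CountingDatabase) -> None:
        self.db = db
        self.store: Dict[Hashable, Any] = {}
        self.hits = 0
        self.misses = 0

    def put(self, key: Hashable, value: Any) -> None:
        # Write-through: cache and database are updated before returning.
        self.store[key] = value
        self.db.write(key, value)

    def get(self, key: Hashable) -> Optional[Any]:
        if key in self.store:
            self.hits += 1  # served from cache, database untouched
            return self.store[key]
        # Assumed fallback for entries that were evicted or expired.
        self.misses += 1
        value = self.db.read(key)
        if value is not None:  # this sketch treats None as "not found"
            self.store[key] = value
        return value


db = CountingDatabase()
cache = WriteThroughCache(db)
cache.put("order:42", {"status": "paid"})
cache.get("order:42")  # cache hit; db.reads is still 0
```

Removing the entry from `cache.store` (standing in for eviction) and reading again causes one database read. This is the one case where a read after a write does not come straight from the cache.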

The trade-off is write latency: every write incurs the cost of two storage operations instead of one. Because both writes must complete before the acknowledgement, the client waits for the cache write (fast) and then the database write (slower). For write-heavy workloads or high-volume event streams, this extra latency on every write can become a bottleneck.
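The latency cost can be worked through with a simple model. The figures of 0.5 ms per cache write and 5 ms per database write are assumed for illustration only, not measurements. The model also ignores parallelism and simply adds the two sequential writes. `Latencies`, `ack_latency_ms`, and `serial_time_s` are hypothetical helpers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Latencies:
    """Illustrative per-write latencies in milliseconds (assumed, not measured)."""
    cache_ms: float = 0.5
    db_ms: float = 5.0


def ack_latency_ms(strategy: str, lat: Latencies) -> float:
    """Time until the client sees its write acknowledged."""
    if strategy == "write-through":
        # Cache write, then database write, both before the ack: latencies add.
        return lat.cache_ms + lat.db_ms
    if strategy == "db-only":
        # Baseline without a cache on the write path: one storage operation.
        return lat.db_ms
    raise ValueError(f"unknown strategy: {strategy}")


def serial_time_s(per_write_ms: float, writes: int) -> float:
    """Total seconds spent waiting when writes are issued one after another."""
    return per_write_ms * writes / 1000.0


lat = Latencies()
wt = ack_latency_ms("write-through", lat)  # 5.5
base = ack_latency_ms("db-only", lat)      # 5.0
print(serial_time_s(wt, 100_000), serial_time_s(base, 100_000))  # 550.0 500.0
```

With these assumed numbers, each acknowledged write takes 5.5 ms instead of 5.0 ms, and 100,000 serial writes spend 550 s rather than 500 s waiting. The absolute gap grows linearly with write volume.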

Write-through also causes cache pollution: data is written to the cache regardless of whether it will ever be read. A record written once and never read again occupies cache memory until its TTL expires or the eviction policy removes it. In a cache of bounded size, such records can push out frequently read data and lower the overall cache hit rate.
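The pollution effect is easiest to see in a bounded cache. The sketch below assumes a fixed capacity with least-recently-used (LRU) eviction, built on `collections.OrderedDict`. Capacity and eviction policy are illustrative assumptions, not part of the write-through pattern itself. `LRUWriteThroughCache` is a hypothetical name, and the database is a plain dict.

```python
from collections import OrderedDict
from typing import Any, Hashable


class LRUWriteThroughCache:
    """Write-through cache with an assumed fixed capacity and LRU eviction."""

    def __init__(self, capacity: int) -> None:
        self.capacity = capacity
        self.store: OrderedDict = OrderedDict()
        self.db: dict = {}  # hypothetical in-memory database
        self.hits = 0
        self.misses = 0

    def _insert(self, key: Hashable, value: Any) -> None:
        self.store[key] = value
        self.store.move_to_end(key)  # mark as most recently used
        if len(self.store) > self.capacity:
            self.store.popitem(last=False)  # evict the least recently used entry

    def put(self, key: Hashable, value: Any) -> None:
        # Every write lands in the cache, whether or not it will ever be read.
        self._insert(key, value)
        self.db[key] = value

    def get(self, key: Hashable) -> Any:
        if key in self.store:
            self.hits += 1
            self.store.move_to_end(key)
            return self.store[key]
        self.misses += 1
        value = self.db[key]  # this sketch assumes the key exists in the database
        self._insert(key, value)
        return value


cache = LRUWriteThroughCache(capacity=3)
for k in ("a", "b", "c"):        # hot records
    cache.put(k, k.upper())
for k in ("a", "b", "c"):        # 3 hits
    cache.get(k)
for i in range(3):               # write-once records that are never read
    cache.put(f"log:{i}", i)
for k in ("a", "b", "c"):        # now 3 misses: the hot records were evicted
    cache.get(k)
print(cache.hits, cache.misses)  # 3 3
```

Three never-read `log:*` writes are enough to evict every hot record in this tiny cache. The later reads of `a`, `b`, and `c` all miss and go back to the database.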

Compare with the Cache Aside Pattern, where the cache is populated lazily on reads, and with the Write Back Cache, where the database write is deferred. Deferring makes writes faster at the cost of potential data loss if the cache fails before flushing.