Cache Aside Pattern

The cache-aside pattern (also called lazy loading) is a caching strategy where the application code is responsible for loading data into the cache on demand — the cache never proactively populates itself.

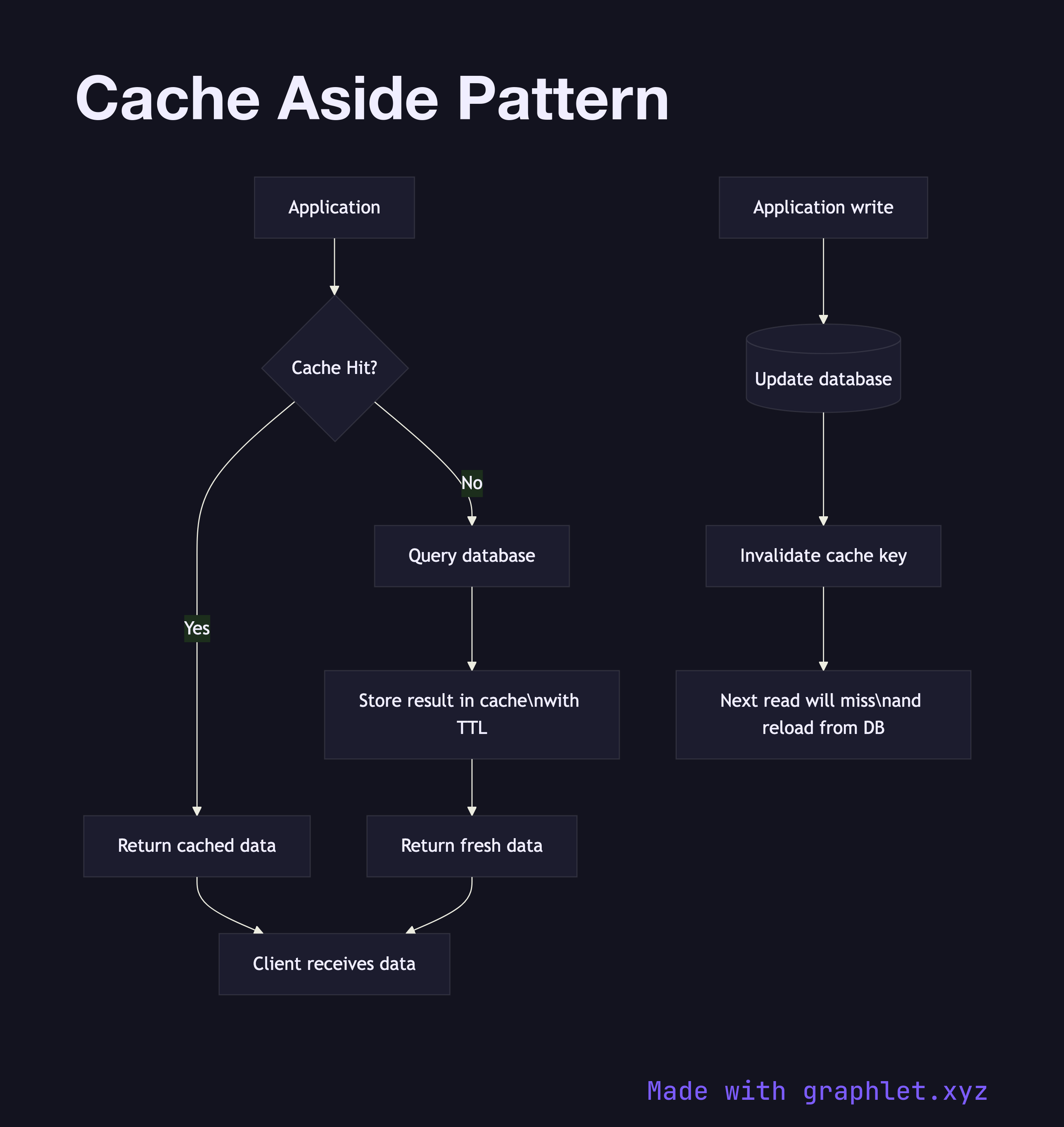

This diagram shows the two code paths: cache hit and cache miss. On every read, the application first checks the cache (typically Redis or Memcached) for the requested key. If the key exists and has not expired (cache hit), the cached value is returned directly to the client without touching the database. This is the fast path: a cache hit typically takes microseconds, whereas a database query typically takes milliseconds.

On a cache miss, the application queries the database for the data, stores the result in the cache with a configured TTL, and then returns the data to the client. Subsequent reads for the same key will hit the cache until the TTL expires or the key is explicitly invalidated.
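The read path above can be sketched in a few lines. This is a minimal illustration, not a production client: `TTLCache` is a hypothetical in-process stand-in for Redis or Memcached, `FakeDB` is a hypothetical database stub that counts queries, and `get_user`, the `user:<id>` key format, and the 300-second default TTL are all illustrative assumptions.

```python
import time
from typing import Any, Callable, Dict, Optional, Tuple


class TTLCache:
    """Hypothetical in-process stand-in for Redis/Memcached (key -> value with expiry)."""

    def __init__(self, clock: Callable[[], float] = time.monotonic) -> None:
        self._data: Dict[str, Tuple[Any, float]] = {}
        self._clock = clock  # injectable so tests can fake the passage of time

    def get(self, key: str) -> Optional[Any]:
        entry = self._data.get(key)
        if entry is None:
            return None
        value, expires_at = entry
        if self._clock() >= expires_at:
            del self._data[key]  # an expired key behaves exactly like a missing one
            return None
        return value

    def set(self, key: str, value: Any, ttl: float) -> None:
        self._data[key] = (value, self._clock() + ttl)


class FakeDB:
    """Hypothetical database stub that counts the queries it serves."""

    def __init__(self, rows: Dict[int, dict]) -> None:
        self.rows = rows
        self.queries = 0

    def fetch_user(self, user_id: int) -> Optional[dict]:
        self.queries += 1
        return self.rows.get(user_id)


def get_user(user_id: int, cache: TTLCache, db: FakeDB, ttl: float = 300.0) -> Optional[dict]:
    key = f"user:{user_id}"
    cached = cache.get(key)
    if cached is not None:
        return cached  # cache hit: the database is not touched
    row = db.fetch_user(user_id)  # cache miss: fall back to the database
    if row is not None:
        cache.set(key, row, ttl)  # populate so later reads hit until the TTL expires
    return row
```

Calling `get_user(1, cache, db)` twice issues one database query; after the TTL has passed, the next call queries again. Because `None` signals a miss in this sketch, IDs that do not exist are never cached, so repeated lookups for them always reach the database; caching a "not found" sentinel is one possible refinement.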

Cache-aside is one of the most widely used caching patterns because it gives the application full control over what data enters the cache, when it expires, and how it is invalidated. Because the application caches only data that has actually been requested, rarely accessed data is never loaded into the cache up front.

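The main risk is the thundering herd (also called a cache stampede): if a popular cached key expires while many requests arrive at once, they all miss the cache, all query the database, and all write the same result back to the cache. This can be mitigated with probabilistic early expiration (requests occasionally refresh a hot key shortly before its TTL runs out), a mutex lock around the miss path so that only one request rebuilds the value while the others wait, or staggered TTLs so that many keys do not expire at the same moment.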
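A minimal sketch of the lock-based and staggered-TTL mitigations, assuming a single process where threads stand in for concurrent requests. `StampedeProtectedCache`, `get_or_load`, and `jittered_ttl` are hypothetical names. The per-key lock covers the whole miss path (re-check, load, store), so concurrent misses on the same key trigger only one database load; the stored TTL is randomly spread around the requested value.

```python
import random
import threading
import time
from typing import Any, Callable, Dict, Tuple


def jittered_ttl(base: float, spread: float = 0.1) -> float:
    """Staggered TTL: spread expirations by +/- `spread` (a fraction) around `base`."""
    return base * random.uniform(1.0 - spread, 1.0 + spread)


class StampedeProtectedCache:
    """Cache-aside reads where at most one thread per key rebuilds a missing value."""

    def __init__(self) -> None:
        self._data: Dict[str, Tuple[Any, float]] = {}
        self._locks: Dict[str, threading.Lock] = {}
        self._locks_guard = threading.Lock()  # protects creation of per-key locks

    def _lock_for(self, key: str) -> threading.Lock:
        with self._locks_guard:
            return self._locks.setdefault(key, threading.Lock())

    def _lookup(self, key: str) -> Tuple[bool, Any]:
        entry = self._data.get(key)
        if entry is not None and time.monotonic() < entry[1]:
            return True, entry[0]
        return False, None

    def get_or_load(self, key: str, loader: Callable[[], Any], ttl: float) -> Any:
        hit, value = self._lookup(key)
        if hit:
            return value  # fast path: no locking on a hit
        with self._lock_for(key):
            # Re-check after acquiring the lock: a thread that held it before us
            # may already have repopulated the key, so we must not reload it.
            hit, value = self._lookup(key)
            if hit:
                return value
            value = loader()  # only the first thread through the lock reaches the database
            self._data[key] = (value, time.monotonic() + jittered_ttl(ttl))
            return value
```

An in-process lock only coordinates threads within one server. Across several application servers, a common distributed counterpart is a short-lived Redis lock acquired with `SET lock-key token NX PX <milliseconds>`. This sketch also never removes per-key locks, which a long-running service would need to bound.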

Cache-aside also leaves a window of inconsistency: between a database write and a cache invalidation (or TTL expiry), readers may get stale data from the cache. Compare this to Write Through Cache, which keeps cache and database in sync on every write, and Write Back Cache, which prioritizes write performance at the cost of durability guarantees.
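A minimal sketch of the write side and its inconsistency window, assuming plain dicts stand in for the cache and the database and that the application writes the database first and then deletes the cache key. `read_price`, `update_price`, and the `price:<sku>` key format are hypothetical, and TTLs are omitted for brevity. Deleting the key rather than overwriting it lets the next read repopulate the cache through the normal miss path.

```python
from typing import Dict


def read_price(sku: str, cache: Dict[str, int], db: Dict[str, int]) -> int:
    """Cache-aside read: return the cached value on a hit, otherwise load and store it."""
    key = f"price:{sku}"
    if key in cache:
        return cache[key]
    value = db[sku]
    cache[key] = value
    return value


def update_price(sku: str, price: int, cache: Dict[str, int], db: Dict[str, int]) -> None:
    db[sku] = price  # 1. write the source of truth first
    # Any read that lands here, between steps 1 and 2, still sees the old cached value.
    cache.pop(f"price:{sku}", None)  # 2. invalidate; the next read repopulates from the database
```

If a reader runs after the database write but before the key is deleted, it returns the old price; once the key is invalidated (or its TTL expires), the next read fetches the new value.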