Write Back Cache

Write-back caching (also called write-behind) is a strategy where writes go to the cache first and are acknowledged immediately, with the cache asynchronously flushing the updated data to the database in the background.

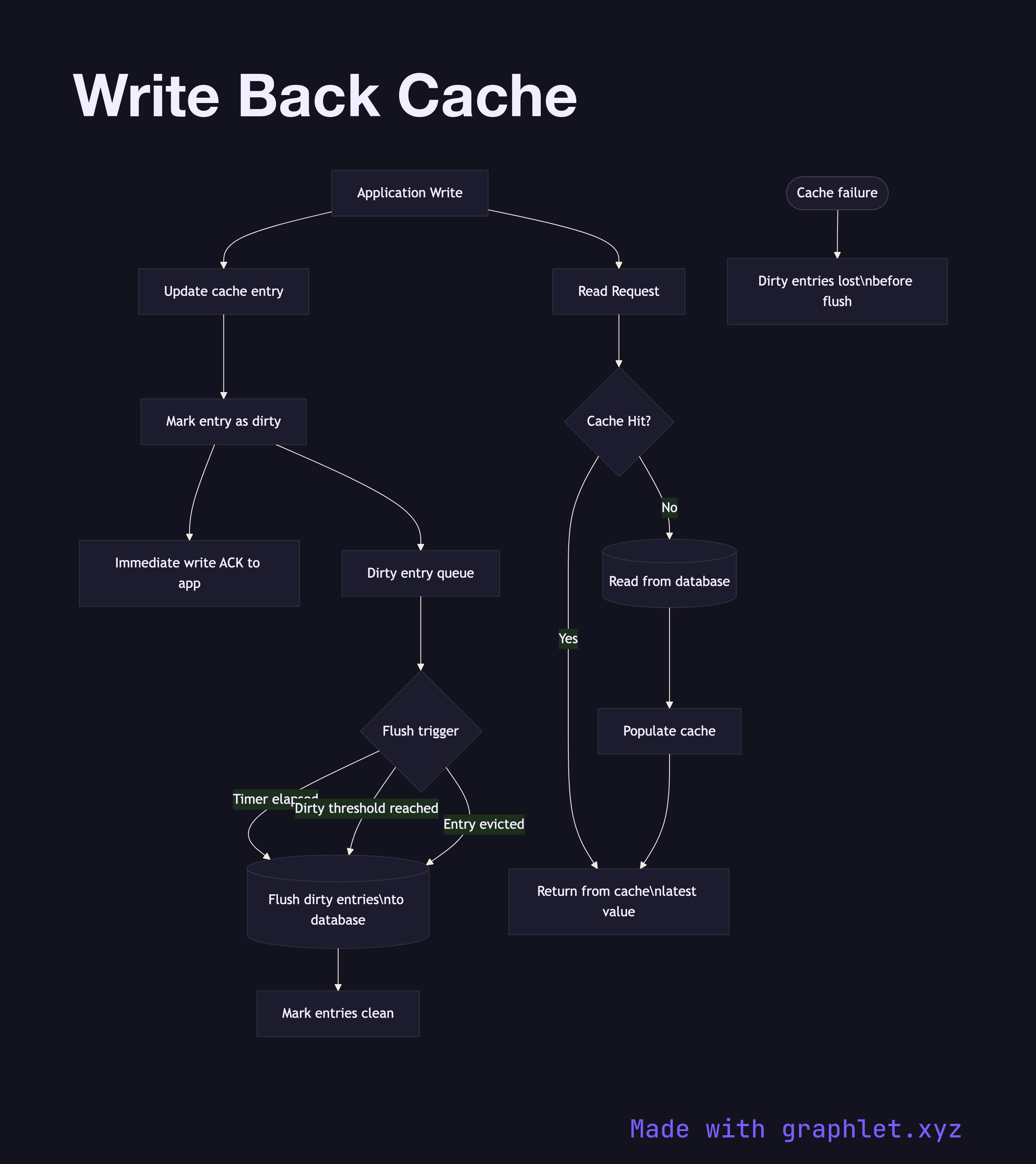

The diagram above shows the write path. The application writes to the cache, the cache marks the entry as dirty (modified but not yet persisted), and immediately acknowledges the write. The application can continue without waiting for a database round-trip. A background flush process, triggered by a timer, a dirty-entry threshold, or cache eviction, later writes the dirty entries to the database and clears their dirty flags.
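The write path described above can be sketched in a few lines of Python. This is a minimal illustration, not a production design: the `WriteBackCache` class, its method names, and the plain dict standing in for the database are all assumptions made for the example, and `flush()` is called by hand where a real system would run it from a background worker.

```python
class WriteBackCache:
    """Toy write-back cache. A plain dict stands in for the database (assumption)."""

    def __init__(self, database):
        self.database = database  # hypothetical backing store
        self.entries = {}         # cached values
        self.dirty = set()        # keys modified but not yet persisted

    def write(self, key, value):
        # Update the cache, mark the entry dirty, and acknowledge at once.
        # No database round-trip happens on this path.
        self.entries[key] = value
        self.dirty.add(key)
        return True

    def read(self, key):
        # Serve from the cache when possible; otherwise load from the database.
        if key not in self.entries:
            self.entries[key] = self.database[key]
        return self.entries[key]

    def flush(self):
        # A background worker would call this on a timer, when the dirty set
        # grows past a threshold, or before evicting a dirty entry.
        for key in list(self.dirty):
            self.database[key] = self.entries[key]
        self.dirty.clear()


database = {}
cache = WriteBackCache(database)

acknowledged = cache.write("player:1:score", 120)
database_before_flush = dict(database)  # snapshot: the write is not persisted yet

cache.flush()
database_after_flush = dict(database)
```

Between the acknowledgement and the call to `flush()`, the new value exists only in the cache, which is exactly the window in which a cache crash would lose it.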

The primary advantage of write-back is write throughput: multiple writes to the same key can be coalesced into a single database write. If a record is updated 100 times in one second, the cache absorbs all 100 updates and flushes only the final value, once. This sharply reduces the write load on the database, making write-back well suited to high-frequency update scenarios such as counters, analytics aggregations, or game state.
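The coalescing effect can be made concrete by counting database writes. The sketch below is illustrative only: `CountingDatabase`, `write_every_update`, and `write_back` are hypothetical names, and the "flush" is a single pass at the end rather than a real background process.

```python
class CountingDatabase:
    """Stand-in database that counts how many writes it receives (assumption)."""

    def __init__(self):
        self.rows = {}
        self.write_count = 0

    def put(self, key, value):
        self.rows[key] = value
        self.write_count += 1


def write_every_update(db, updates):
    # Baseline: each update goes straight to the database.
    for key, value in updates:
        db.put(key, value)


def write_back(db, updates):
    # Write-back: updates land in the cache first; later writes to the same
    # key overwrite earlier ones, so only the latest value is flushed.
    dirty = {}
    for key, value in updates:
        dirty[key] = value
    for key, value in dirty.items():  # one flush at the end of the interval
        db.put(key, value)


# 100 updates to the same record, as in the example above.
updates = [("page_views", n) for n in range(1, 101)]

direct_db = CountingDatabase()
write_every_update(direct_db, updates)

coalesced_db = CountingDatabase()
write_back(coalesced_db, updates)
```

Both databases end with the same final value, but `direct_db.write_count` is 100 while `coalesced_db.write_count` is 1.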

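The critical risk is data loss: if the cache crashes before a dirty entry is flushed to the database, that write is permanently lost, even though the application was told it succeeded. For this reason write-back caches are inappropriate for financial transactions or any other data where durability is critical. Mitigation strategies include persisting dirty writes to an append-only log on disk (for example, Redis AOF persistence), replicating the cache itself, or using short flush intervals. These measures shrink the window of possible loss, but they do not necessarily eliminate it, and each one gives back some of the latency or simplicity that write-back was chosen for.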
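As a rough illustration of the dirty-log mitigation, the sketch below appends every write to a file and forces it to disk before acknowledging, then rebuilds the dirty entries by replaying the file after a simulated crash. It is a toy under stated assumptions: the JSON-lines format and the `log_write` and `replay` helpers are invented for this example and do not describe how Redis AOF is implemented.

```python
import json
import os
import tempfile


def log_write(log_path, key, value):
    # Append the write to the log and force it to disk before acknowledging.
    with open(log_path, "a", encoding="utf-8") as log:
        log.write(json.dumps({"key": key, "value": value}) + "\n")
        log.flush()
        os.fsync(log.fileno())


def replay(log_path):
    # Rebuild the dirty entries after a restart; later records win.
    recovered = {}
    with open(log_path, encoding="utf-8") as log:
        for line in log:
            record = json.loads(line)
            recovered[record["key"]] = record["value"]
    return recovered


log_path = os.path.join(tempfile.mkdtemp(), "dirty.log")

log_write(log_path, "score:alice", 10)
log_write(log_path, "score:alice", 25)
log_write(log_path, "score:bob", 7)

# Simulated crash: the in-memory cache is gone and only the log file remains.
recovered = replay(log_path)
```

Syncing on every write closes the loss window but adds a disk round-trip to each acknowledgement. Syncing less often restores speed at the cost of losing the most recent unsynced writes on a crash.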

Write-back differs fundamentally from Write Through Cache on durability: write-through acknowledges a write only after it has also been written to the database, while write-back optimizes for speed at the cost of a durability window. The Cache Aside Pattern avoids this trade-off by having the application write to the database directly and treat the cache as a separate read optimization, but it requires the application to manage consistency between the two manually.