Event Streaming Architecture

Event streaming architecture is a system design pattern in which services communicate by publishing and subscribing to a continuous, replayable stream of immutable event records rather than making direct synchronous calls.

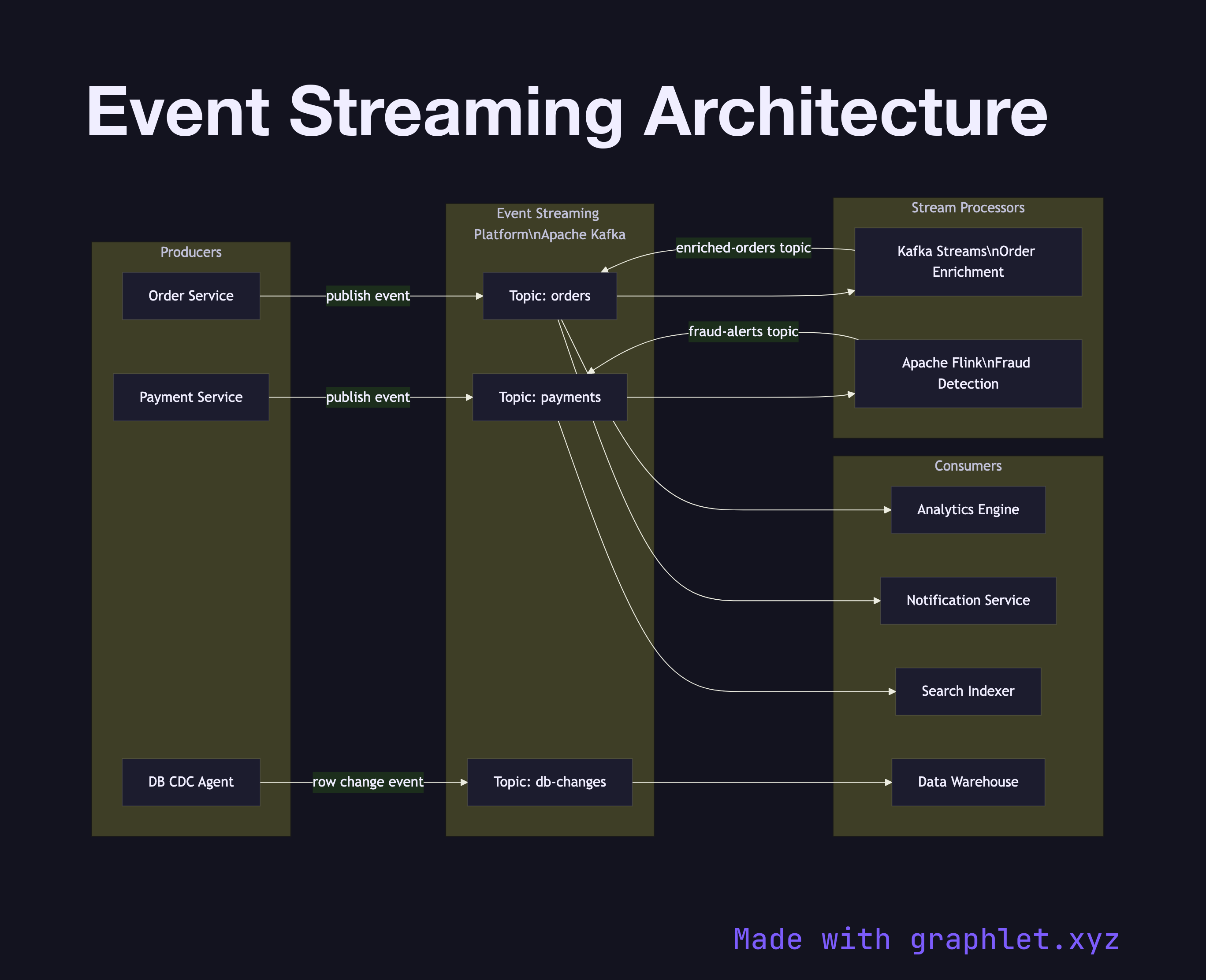

At its core, event streaming replaces point-to-point integration with a durable event log at the center. Producers — which can be microservices, IoT devices, database change-data-capture (CDC) agents, or user-facing APIs — emit events describing things that have happened: an order was placed, a sensor reading was recorded, a payment was processed. These events are written to a streaming platform like Apache Kafka and retained for a configurable period, independent of whether any consumer has read them.
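As a rough illustration of the durable log described above, the following sketch models an append-only log whose retention depends only on event age, not on whether anything has read the events. It is an in-memory toy, not Kafka's API; `Event`, `EventLog`, `retention_seconds`, and the `now` parameter are hypothetical names chosen for this example.

```python
import time
from dataclasses import dataclass


@dataclass(frozen=True)  # frozen: an event record cannot be modified after creation
class Event:
    offset: int
    timestamp: float
    type: str
    payload: dict


class EventLog:
    """Hypothetical in-memory stand-in for a durable event log."""

    def __init__(self, retention_seconds):
        self.retention_seconds = retention_seconds
        self._events = []
        self._next_offset = 0

    def append(self, type, payload, now=None):
        # Producers only ever append; offsets increase monotonically.
        ts = time.time() if now is None else now
        event = Event(self._next_offset, ts, type, payload)
        self._events.append(event)
        self._next_offset += 1
        return event

    def read(self, from_offset):
        # Reading never removes events, so any number of readers can call this.
        return [e for e in self._events if e.offset >= from_offset]

    def expire(self, now=None):
        # Retention is based on age alone, regardless of consumer progress.
        now = time.time() if now is None else now
        cutoff = now - self.retention_seconds
        before = len(self._events)
        self._events = [e for e in self._events if e.timestamp >= cutoff]
        return before - len(self._events)
```

A producer would call `append("order_placed", {...})`, while a periodic housekeeping task would call `expire()`. Real platforms persist the log to disk and replicate it, which this sketch leaves out.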

Downstream consumers read from the event log at their own pace. A stream processor like Kafka Streams, Apache Flink, or Spark Structured Streaming can perform stateful aggregations, joins, and enrichments in real time. Multiple independent consumer groups — analytics engines, notification services, search indexers — can all replay the same stream without interfering with each other, a property that enables Fan Out Messaging.
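The independence of consumer groups comes from each group tracking its own read position. The sketch below shows that idea under simplifying assumptions: the log is a plain Python list, and `ConsumerGroups`, `poll`, and the group names are hypothetical rather than part of any real client library.

```python
class ConsumerGroups:
    """Tracks one read offset per group over a shared, append-only log."""

    def __init__(self, log):
        self._log = log        # shared list of events, never mutated by readers
        self._offsets = {}     # group name -> next offset to read

    def poll(self, group, max_events=10):
        # Each group resumes from its own offset, so a slow group
        # never blocks or steals events from a fast one.
        start = self._offsets.get(group, 0)
        batch = self._log[start:start + max_events]
        self._offsets[group] = start + len(batch)
        return batch


log = ["order_placed", "payment_processed", "order_shipped"]
groups = ConsumerGroups(log)

first_analytics_batch = groups.poll("analytics", max_events=2)
search_batch = groups.poll("search-indexer")
second_analytics_batch = groups.poll("analytics")
```

Here `analytics` reads the log in two batches while `search-indexer` reads all three events at once; both groups end up having seen every event.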

This architecture provides several properties that synchronous RPC systems lack. Because the log is immutable and replayable, consumers can rebuild their state from scratch by replaying historical events, which is the foundation of the Event Sourcing Pattern. New services can be added without modifying producers. Temporal decoupling means producers and consumers can be deployed and scaled independently, and a failure in one does not immediately take down the other.
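Rebuilding state by replay amounts to folding over the event history from the first offset. A minimal sketch, assuming events are dictionaries with hypothetical `type` and `order_id` fields:

```python
def rebuild_order_status(events):
    """Derive the current status of every order purely from the event history."""
    status = {}
    for event in events:
        if event["type"] == "order_placed":
            status[event["order_id"]] = "placed"
        elif event["type"] == "payment_processed":
            status[event["order_id"]] = "paid"
        # Unknown event types are ignored, so new producers can add
        # event types without breaking this consumer.
    return status


history = [
    {"type": "order_placed", "order_id": 1},
    {"type": "order_placed", "order_id": 2},
    {"type": "payment_processed", "order_id": 1},
    {"type": "sensor_reading", "value": 21.5},
]

state = rebuild_order_status(history)
```

Because the function depends only on the events it is given, replaying the same history always produces the same state. That is what lets a new or recovering service start with an empty store and catch up from the log.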

The trade-off is eventual consistency: a consumer's view of the world lags behind the event log by the time it takes to process outstanding messages. For workflows requiring coordinated multi-service state changes, a common approach is to combine event streaming with the Saga Pattern, which splits the change into local steps and undoes completed steps with compensating actions when a later step fails.
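One way to picture a saga over an event stream is a choreography in which each service reacts to events and emits follow-up events, with a failure event triggering a compensating action. The sketch below is a simplified in-process simulation under that assumption; the service functions, event fields, and `run_saga` helper are all hypothetical.

```python
from collections import deque


def payment_service(event, balances):
    """React to order_placed by charging the customer or reporting failure."""
    if event["type"] != "order_placed":
        return []
    customer, amount = event["customer"], event["amount"]
    if balances.get(customer, 0) >= amount:
        balances[customer] -= amount
        return [{"type": "payment_processed", "order_id": event["order_id"]}]
    return [{"type": "payment_failed", "order_id": event["order_id"]}]


def order_service(event, orders):
    """Track order state; cancelling on payment_failed is the compensation."""
    if event["type"] == "order_placed":
        orders[event["order_id"]] = "pending"
    elif event["type"] == "payment_processed":
        orders[event["order_id"]] = "confirmed"
    elif event["type"] == "payment_failed":
        orders[event["order_id"]] = "cancelled"
    return []


def run_saga(initial_events, balances):
    log, orders = [], {}
    pending = deque(initial_events)
    while pending:
        event = pending.popleft()
        log.append(event)  # every event is recorded before services react
        pending.extend(order_service(event, orders))
        pending.extend(payment_service(event, balances))
    return orders, log


balances = {"alice": 100, "bob": 10}
orders, log = run_saga(
    [
        {"type": "order_placed", "order_id": 1, "customer": "alice", "amount": 30},
        {"type": "order_placed", "order_id": 2, "customer": "bob", "amount": 50},
    ],
    balances,
)
```

Between `order_placed` and the payment outcome, an order sits in the `pending` state. That window is the eventual consistency described above, made explicit in the data model.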