Load Balancer Routing

A load balancer is a network component that distributes incoming requests across multiple backend servers, preventing any single server from becoming a bottleneck while providing fault tolerance and horizontal scalability.

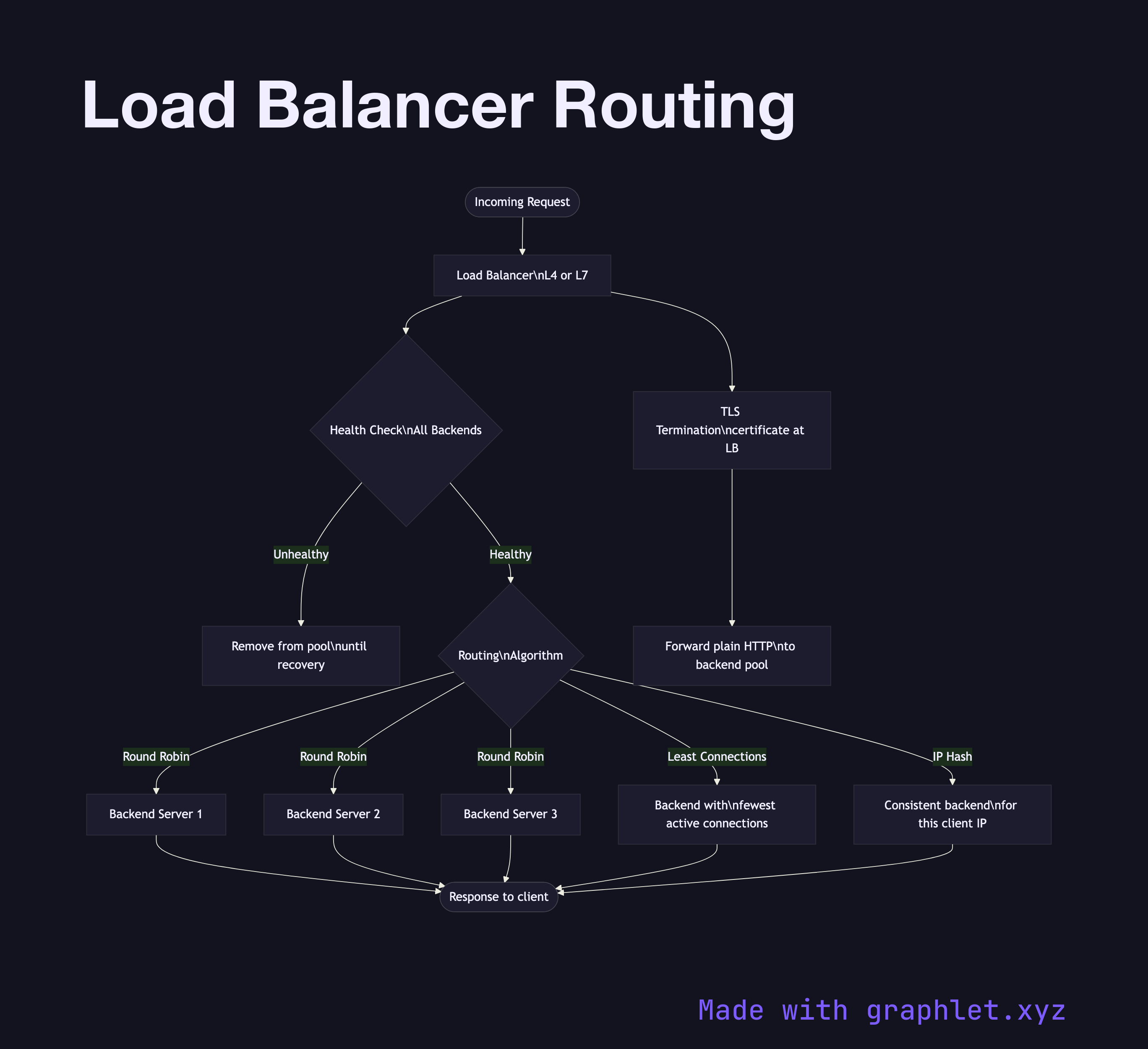

Load balancers operate at different network layers. Layer 4 (Transport) load balancers route based on IP address and TCP/UDP port: they see source and destination addresses but not HTTP content. Layer 7 (Application) load balancers parse HTTP requests and can route based on URL path, hostname, cookies, and other headers or request content. AWS ALB operates at Layer 7, while Nginx, HAProxy, and Envoy can be configured for either Layer 4 or Layer 7 load balancing.
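A Layer 7 routing decision amounts to a lookup on the parsed hostname and path. The Python sketch below is illustrative only and does not reproduce any particular balancer's configuration. The `Request` type, the routing table, the pool names, and the "longest matching path prefix wins" rule are all assumptions. A Layer 4 balancer could not run this function at all, because it never sees the `Host` header or the path.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Request:
    # Fields an L7 balancer gets after parsing HTTP. An L4 balancer only has
    # the connection's addresses and ports, so it cannot route on these.
    host: str
    path: str


# Hypothetical routing table: (hostname, path prefix, backend pool name).
ROUTES = [
    ("api.example.com", "/v2/", "api-v2-pool"),
    ("api.example.com", "/", "api-v1-pool"),
    ("static.example.com", "/", "static-pool"),
]


def route_l7(req: Request, default: str = "default-pool") -> str:
    """Return the pool whose host matches and whose path prefix is longest."""
    best, best_len = default, -1
    for host, prefix, pool in ROUTES:
        if req.host == host and req.path.startswith(prefix) and len(prefix) > best_len:
            best, best_len = pool, len(prefix)
    return best
```

For example, `/v2/users` on `api.example.com` lands in `api-v2-pool`, any other path on that host falls through to `api-v1-pool`, and an unknown host gets the default pool.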

Routing Algorithms:

- Round Robin: Requests are distributed sequentially across backends. Simple and effective when all backends have equal capacity and requests cost roughly the same.
- Weighted Round Robin: Each backend is assigned a weight, and backends with higher capacity receive proportionally more requests.
- Least Connections: Each new request goes to the backend with the fewest active connections. This works better for requests of variable duration, such as file uploads.
- IP Hash: The client IP is hashed so that a given client is consistently routed to the same backend (session affinity, or "sticky sessions").
- Power of Two Random Choices (P2C): Randomly sample two backends and send the request to the one with fewer active connections. This spreads load far more evenly than picking a single backend at random, and it only inspects two backends per request instead of scanning all of them.
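Each of the algorithms above fits in a few lines of Python. This is an illustrative sketch, not production code. The `Backend` type and all function and class names are hypothetical, and the caller is assumed to keep each backend's `active` connection count up to date. For the weighted case, the sketch uses the "smooth" weighted round robin that nginx's upstream module uses, which is one of several ways to implement weighting.

```python
import hashlib
import itertools
import random
from dataclasses import dataclass


@dataclass
class Backend:
    name: str         # assumed unique within a pool
    weight: int = 1   # relative capacity, used by weighted round robin
    active: int = 0   # open connections; the caller is assumed to maintain this


class RoundRobin:
    """Hand out backends in a fixed cyclic order."""
    def __init__(self, backends: list[Backend]):
        self._cycle = itertools.cycle(backends)

    def pick(self) -> Backend:
        return next(self._cycle)


class SmoothWeightedRoundRobin:
    """On each pick: add every weight to its running score, choose the highest
    score, then subtract the total weight from the winner. Over one cycle each
    backend is chosen `weight` times, interleaved rather than in bursts."""
    def __init__(self, backends: list[Backend]):
        self._backends = backends
        self._score = {b.name: 0 for b in backends}
        self._total = sum(b.weight for b in backends)

    def pick(self) -> Backend:
        for b in self._backends:
            self._score[b.name] += b.weight
        best = max(self._backends, key=lambda b: self._score[b.name])
        self._score[best.name] -= self._total
        return best


def least_connections(backends: list[Backend]) -> Backend:
    """Backend with the fewest open connections (the first one wins a tie)."""
    return min(backends, key=lambda b: b.active)


def ip_hash(backends: list[Backend], client_ip: str) -> Backend:
    """Same client IP -> same backend, as long as the backend list is unchanged."""
    # Stable digest instead of hash(), which is randomized per process for str.
    digest = hashlib.sha256(client_ip.encode()).digest()
    return backends[int.from_bytes(digest[:8], "big") % len(backends)]


def power_of_two_choices(backends: list[Backend], rng=random) -> Backend:
    """Sample two distinct backends at random and keep the less loaded one.
    Needs at least two backends (rng.sample raises ValueError otherwise)."""
    a, b = rng.sample(backends, 2)
    return a if a.active <= b.active else b
```

With weights 5, 1, 1 for backends `a`, `b`, `c`, the smooth variant yields the order a, a, b, a, c, a, a instead of five consecutive requests to `a`.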

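Health Checks: Load balancers continuously probe backends (an HTTP GET /health, a TCP connect, or a custom check) at configurable intervals. A backend that fails a configured number of consecutive checks is removed from rotation and is added back once it passes again. This is also what enables zero-downtime rolling deployments: a newly started instance receives no traffic until it passes its health checks, and an instance that is shutting down drops out of rotation instead of receiving requests that would fail.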
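A health checker with failure and recovery thresholds might look like the Python sketch below. The `/health` URLs, the helper names, and the thresholds (three consecutive failures to remove a backend, two consecutive successes to restore it, named after HAProxy's `fall` and `rise` server options) are assumptions for illustration. The probe function is injectable so that other check types can be substituted for the HTTP one.

```python
import time
import urllib.request
from dataclasses import dataclass
from typing import Callable


def http_probe(url: str, timeout: float = 2.0) -> bool:
    """Healthy if GET <url> answers with a 2xx status before the timeout."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return 200 <= resp.status < 300
    except OSError:  # connection refused, timeout, or a 4xx/5xx HTTPError
        return False


@dataclass
class HealthState:
    fall: int = 3             # consecutive failures before removal (assumed)
    rise: int = 2             # consecutive successes before re-adding (assumed)
    in_rotation: bool = True
    streak: int = 0           # consecutive results that disagree with in_rotation

    def record(self, ok: bool) -> bool:
        """Feed one probe result; return whether the backend should get traffic."""
        if ok == self.in_rotation:
            self.streak = 0        # result confirms the current state
            return self.in_rotation
        self.streak += 1
        threshold = self.fall if self.in_rotation else self.rise
        if self.streak >= threshold:
            self.in_rotation = ok  # flip state after enough agreeing results
            self.streak = 0
        return self.in_rotation


def check_round(states: dict[str, HealthState],
                probe: Callable[[str], bool]) -> list[str]:
    """Probe every backend once and return the URLs still eligible for traffic."""
    healthy = []
    for url, state in states.items():
        if state.record(probe(url)):
            healthy.append(url)
    return healthy


def run_checks(states: dict[str, HealthState],
               probe: Callable[[str], bool] = http_probe,
               interval: float = 5.0) -> None:
    """Hypothetical driver loop: check every backend at a fixed interval, forever."""
    while True:
        healthy = check_round(states, probe)
        print("in rotation:", healthy)  # a real balancer would update its pool here
        time.sleep(interval)
```

Requiring several consecutive results before changing state prevents a single dropped probe from pulling a healthy backend out of rotation. It also prevents a flapping backend from being re-added after one lucky success.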

SSL/TLS Termination: L7 load balancers typically terminate TLS, forwarding plain HTTP to backends on an internal network. This centralizes certificate management and reduces backend CPU overhead.

See Reverse Proxy Request Flow for the closely related pattern where a single reverse proxy sits in front of backends.