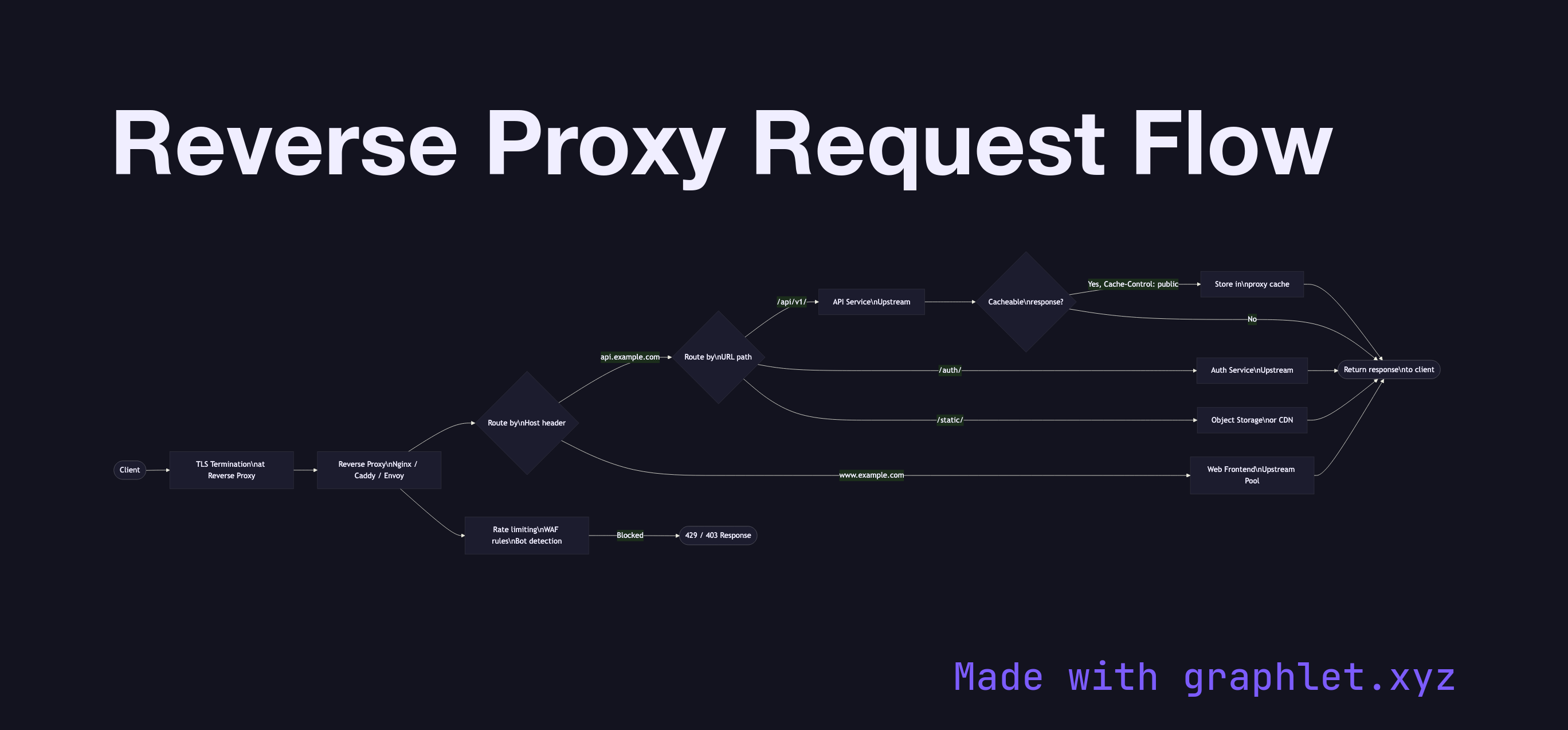

Reverse Proxy Request Flow

A reverse proxy is a server-side intermediary that accepts incoming client requests and forwards them to one or more backend servers, providing TLS termination, request routing, load balancing, caching, and security filtering from a single entry point.

Unlike a forward proxy, which acts on behalf of clients, a reverse proxy acts on behalf of the backend infrastructure. From the client's perspective, the proxy is the destination server: the backends behind it are not visible, and the only address the client sees is the one the proxy exposes.

TLS Termination: The reverse proxy handles TLS on behalf of all backends. Clients establish TLS with the proxy; backends then receive either plain HTTP over a trusted internal network or traffic that the proxy re-encrypts over a separate TLS session. This centralizes certificate management (one wildcard or multi-SAN cert), offloads cryptographic work from application servers, and lets the proxy inspect HTTP headers for routing, since it sees the decrypted request.
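Once TLS ends at the proxy, a backend receiving plain HTTP can no longer tell on its own whether the client connection was encrypted or what the client's address was. A common convention, not described above and assumed here, is for the proxy to pass this along in `X-Forwarded-For` and `X-Forwarded-Proto` headers. The sketch below shows one way that header rewrite might look; the function name `build_upstream_headers` and its parameters are hypothetical.

```python
from typing import Dict


def build_upstream_headers(
    client_headers: Dict[str, str],
    client_ip: str,
    client_used_tls: bool,
) -> Dict[str, str]:
    """Return the headers a proxy might send to a backend after terminating TLS.

    Hypothetical helper for illustration; real proxies expose this as configuration.
    """
    # HTTP header names are case-insensitive, so normalize them to lowercase.
    headers = {name.lower(): value for name, value in client_headers.items()}

    # Append the connecting client's address to any existing forwarding chain.
    prior = headers.get("x-forwarded-for")
    headers["x-forwarded-for"] = f"{prior}, {client_ip}" if prior else client_ip

    # Overwrite rather than trust a client-supplied value: only the proxy
    # knows whether the client-facing connection actually used TLS.
    headers["x-forwarded-proto"] = "https" if client_used_tls else "http"
    return headers
```

A backend that trusts these headers should only accept them from the proxy's address, since any client can send the same header names directly.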

Virtual Host Routing: A single reverse proxy can serve multiple applications by routing based on the Host header. A request for api.example.com goes to the API cluster; www.example.com goes to the web frontend. This is standard configuration in Nginx, Caddy, and cloud load balancers.
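As a rough illustration of Host-based routing, the sketch below maps a `Host` header value to a backend pool. The hostnames come from the example above; the backend addresses, the `VHOSTS` table, and the `select_pool` function are invented for illustration and do not reflect how any particular proxy implements this.

```python
from typing import Dict, List, Optional

# Hypothetical routing table: the backend addresses are placeholders.
VHOSTS: Dict[str, List[str]] = {
    "api.example.com": ["10.0.1.10:8080", "10.0.1.11:8080"],
    "www.example.com": ["10.0.2.10:8080"],
}


def select_pool(host_header: str) -> Optional[List[str]]:
    """Return the backend pool for a Host header, or None if the host is unknown."""
    # Hostnames are case-insensitive.
    hostname = host_header.strip().lower()
    # Drop an optional ":port" suffix. IPv6 literals are not handled in this sketch.
    hostname = hostname.split(":", 1)[0]
    return VHOSTS.get(hostname)
```

An unknown host returns `None`, which a real proxy would typically turn into a default site or an error response.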

Path-Based Routing: Within a single hostname, requests can be routed by URL path prefix: /api/ routes to the API service, /static/ routes to an object store or CDN, /auth/ routes to an authentication service. This is the foundation of API gateway patterns (see API Gateway Request Flow).
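Path-prefix routing can be sketched as a longest-prefix lookup. The prefixes below are the ones named above; the upstream names, the default upstream, and the `route_path` function are assumptions made for the example.

```python
from typing import Dict

# Prefixes from the text; the upstream names are hypothetical labels.
ROUTES: Dict[str, str] = {
    "/api/": "api-service",
    "/static/": "object-store",
    "/auth/": "auth-service",
}
DEFAULT_UPSTREAM = "web-frontend"  # assumed fallback for unmatched paths


def route_path(path: str) -> str:
    """Pick an upstream for a request path by longest matching prefix."""
    matches = [prefix for prefix in ROUTES if path.startswith(prefix)]
    if not matches:
        return DEFAULT_UPSTREAM
    # The longest prefix wins, so a more specific route added later
    # (for example a nested prefix under /api/) would take priority.
    return ROUTES[max(matches, key=len)]
```

Preferring the longest match is one reasonable tie-breaking rule; some proxies instead evaluate rules in declaration order.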

Response Caching: Reverse proxies can cache backend responses (for GET requests with appropriate Cache-Control headers), serving subsequent identical requests from cache without hitting the backend — similar to a CDN at the edge of your own infrastructure.
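The caching decision can be illustrated with a small in-memory cache that stores only GET responses carrying a positive `max-age`. This is a deliberately simplified sketch: it ignores `s-maxage`, `Vary`, revalidation, and most other rules from the HTTP caching specification, and the `ResponseCache` class is hypothetical.

```python
import re
import time
from typing import Callable, Dict, Optional, Tuple


class ResponseCache:
    """Minimal in-memory response cache keyed by URL (illustrative only)."""

    def __init__(self, clock: Callable[[], float] = time.monotonic) -> None:
        self._clock = clock  # injectable so expiry can be tested without sleeping
        self._entries: Dict[str, Tuple[float, bytes]] = {}

    def store(self, method: str, url: str, cache_control: str, body: bytes) -> bool:
        """Cache a backend response if allowed; return True when it was stored."""
        if method.upper() != "GET":
            return False
        directives = [d.strip().lower() for d in cache_control.split(",")]
        # A shared cache must not keep responses marked no-store or private.
        if "no-store" in directives or "private" in directives:
            return False
        for directive in directives:
            match = re.fullmatch(r"max-age=(\d+)", directive)
            if match and int(match.group(1)) > 0:
                expires_at = self._clock() + int(match.group(1))
                self._entries[url] = (expires_at, body)
                return True
        return False  # no usable freshness lifetime, so do not cache

    def lookup(self, method: str, url: str) -> Optional[bytes]:
        """Return a fresh cached body, or None on a miss or an expired entry."""
        if method.upper() != "GET":
            return None
        entry = self._entries.get(url)
        if entry is None:
            return None
        expires_at, body = entry
        if self._clock() >= expires_at:
            del self._entries[url]  # expired: evict and fall through to the backend
            return None
        return body
```

On a `lookup` miss the proxy would forward the request to the backend and then call `store` with the response.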

Security Layer: Rate limiting, WAF (Web Application Firewall) rules, bot detection, and DDoS mitigation are commonly implemented at the reverse proxy layer.
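Of the controls listed above, rate limiting is the easiest to show in a few lines. The sketch below uses a token bucket, which is one common algorithm for this and not necessarily what any given proxy uses; the `TokenBucket` class and its parameters are hypothetical.

```python
import time
from typing import Callable


class TokenBucket:
    """Token-bucket rate limiter: allows bursts up to `capacity`,
    then a sustained `rate` requests per second."""

    def __init__(
        self,
        rate: float,
        capacity: int,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.rate = rate
        self.capacity = capacity
        self._clock = clock  # injectable so behavior can be tested deterministically
        self._tokens = float(capacity)  # start full so an initial burst is allowed
        self._last = clock()

    def allow(self) -> bool:
        """Consume one token if available; return False when the caller is over the limit."""
        now = self._clock()
        # Refill in proportion to elapsed time, never exceeding capacity.
        elapsed = now - self._last
        self._tokens = min(float(self.capacity), self._tokens + elapsed * self.rate)
        self._last = now
        if self._tokens >= 1.0:
            self._tokens -= 1.0
            return True
        return False
```

In practice a proxy would keep one bucket per client key (for example per source IP or API token) and answer rejected requests with HTTP 429.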