Long Polling Request Flow

Long polling is a technique for near-real-time server push over standard HTTP. The client sends a request that the server deliberately holds open until new data is available or a timeout expires; once the server responds, the client immediately reconnects.

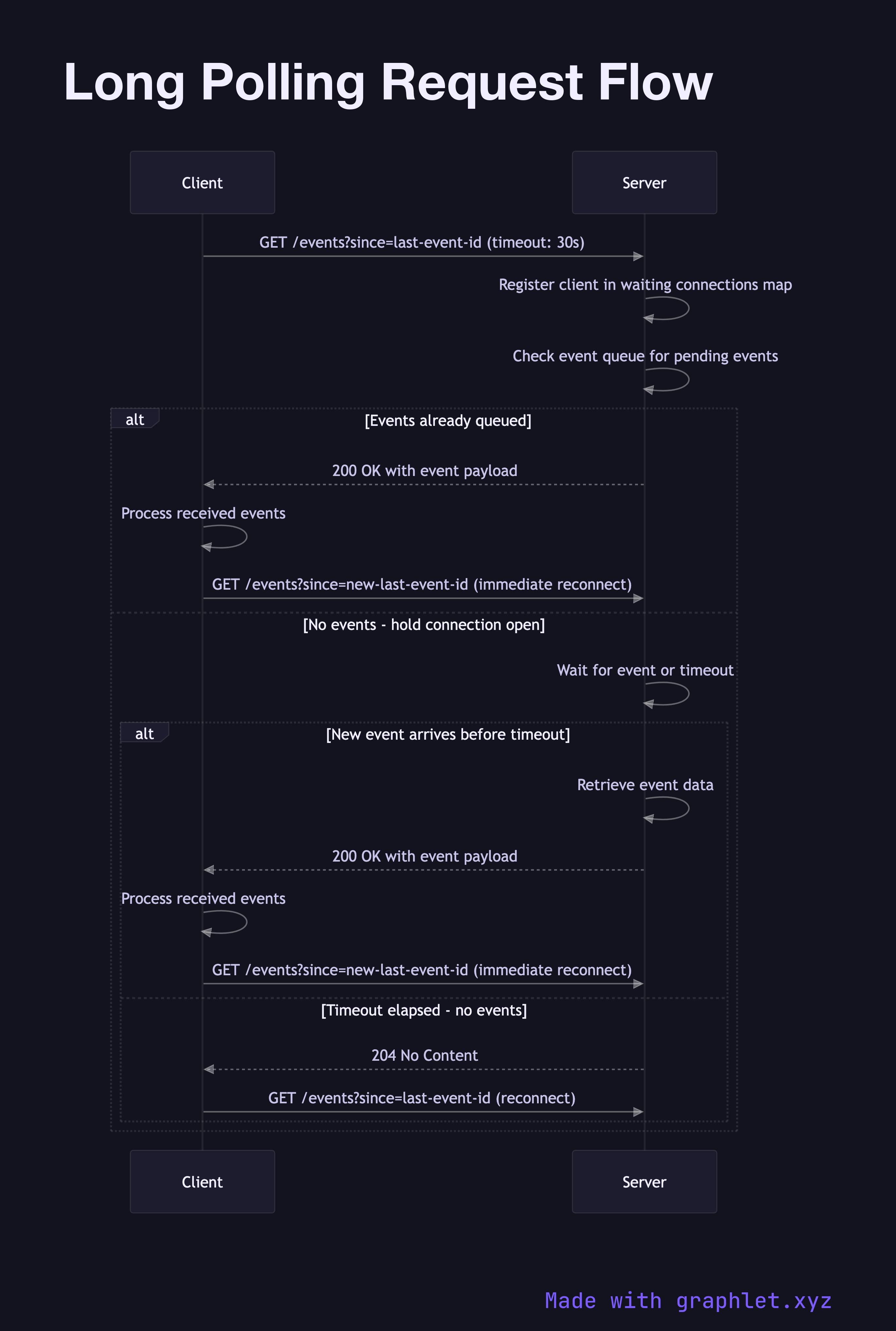

What the diagram shows

This sequence diagram illustrates the long polling cycle between a Client and a Server, including how it differs from both short polling and WebSockets:

1. Client connects: the client sends a standard HTTP GET request with a request timeout header (e.g., 30 seconds) and optionally a Last-Event-ID to indicate what was last received.
2. Server holds connection: instead of responding immediately, the server suspends the response and waits, either by registering a callback on an event source or by polling an internal queue.
3. Event arrives: when a new event occurs (e.g., a new chat message, a job status change), the server writes the response and sends it to the waiting client.
4. Timeout path: if no event arrives before the timeout, the server sends an empty 200 OK or 204 No Content. The client reconnects immediately.
5. Client reconnects: after receiving any response (event or timeout), the client issues a new long-poll request. From the outside, this looks like a continuous connection.
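
The cycle above can be sketched in a few lines of Python. This is a minimal in-process simulation rather than a real HTTP service: an `asyncio.Queue` stands in for the server's event source, and a direct function call stands in for the GET request. The names `hold_request`, `long_poll_client`, and `demo`, along with the short timeouts, are assumptions made for illustration and do not come from the diagram.

```python
import asyncio
from typing import List, Optional, Tuple

Response = Tuple[int, Optional[str]]  # (HTTP-like status code, body)


async def hold_request(events: asyncio.Queue, timeout: float) -> Response:
    """Server side (hypothetical): hold the request until an event or timeout."""
    try:
        # Step 2: suspend the response while waiting on the event source.
        event = await asyncio.wait_for(events.get(), timeout)
        return 200, event  # Step 3: an event arrived, respond with it.
    except asyncio.TimeoutError:
        return 204, None   # Step 4: nothing arrived, respond with no content.


async def long_poll_client(
    events: asyncio.Queue, timeout: float, max_requests: int
) -> List[Response]:
    """Client side (hypothetical): reissue a request after every response."""
    responses: List[Response] = []
    for _ in range(max_requests):
        # Stand-in for an HTTP GET; a real client would call a URL here.
        response = await hold_request(events, timeout)
        responses.append(response)
        # Step 5: loop around immediately, whether we got an event or a timeout.
    return responses


async def demo() -> List[Response]:
    events: asyncio.Queue = asyncio.Queue()

    async def producer() -> None:
        await asyncio.sleep(0.05)
        await events.put("chat: hello")
        await asyncio.sleep(0.3)  # longer than the poll timeout, forcing one 204
        await events.put("job: done")

    task = asyncio.create_task(producer())
    responses = await long_poll_client(events, timeout=0.2, max_requests=3)
    await task
    return responses


if __name__ == "__main__":
    for status, body in asyncio.run(demo()):
        print(status, body)
```

In a real deployment the client loop would usually also back off briefly after network errors, so that a failing server is not hit with immediate reconnects.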

Why this matters

Long polling predates WebSockets and Server-Sent Events but remains widely used because it works over standard HTTP/1.1 with no special infrastructure: it traverses proxies, firewalls, and load balancers that might block persistent TCP connections. The trade-off is higher per-message overhead (a full HTTP request/response cycle per event batch) and harder connection management under high concurrency, because every waiting client keeps a connection open on the server.

For full-duplex real-time communication, compare with WebSocket Connection Flow. For server-initiated push without client reconnect overhead, see the networking SSE Event Stream diagram. The Serverless Request Flow illustrates why serverless functions are a poor fit for long polling — held connections consume the function's execution time budget.