CDN Edge Caching

CDN edge caching is the mechanism by which a Content Delivery Network stores copies of assets (static files, API responses, or rendered pages) at geographically distributed Points of Presence (PoPs) and serves them to users from the nearest location, so that not every request has to reach the origin server.

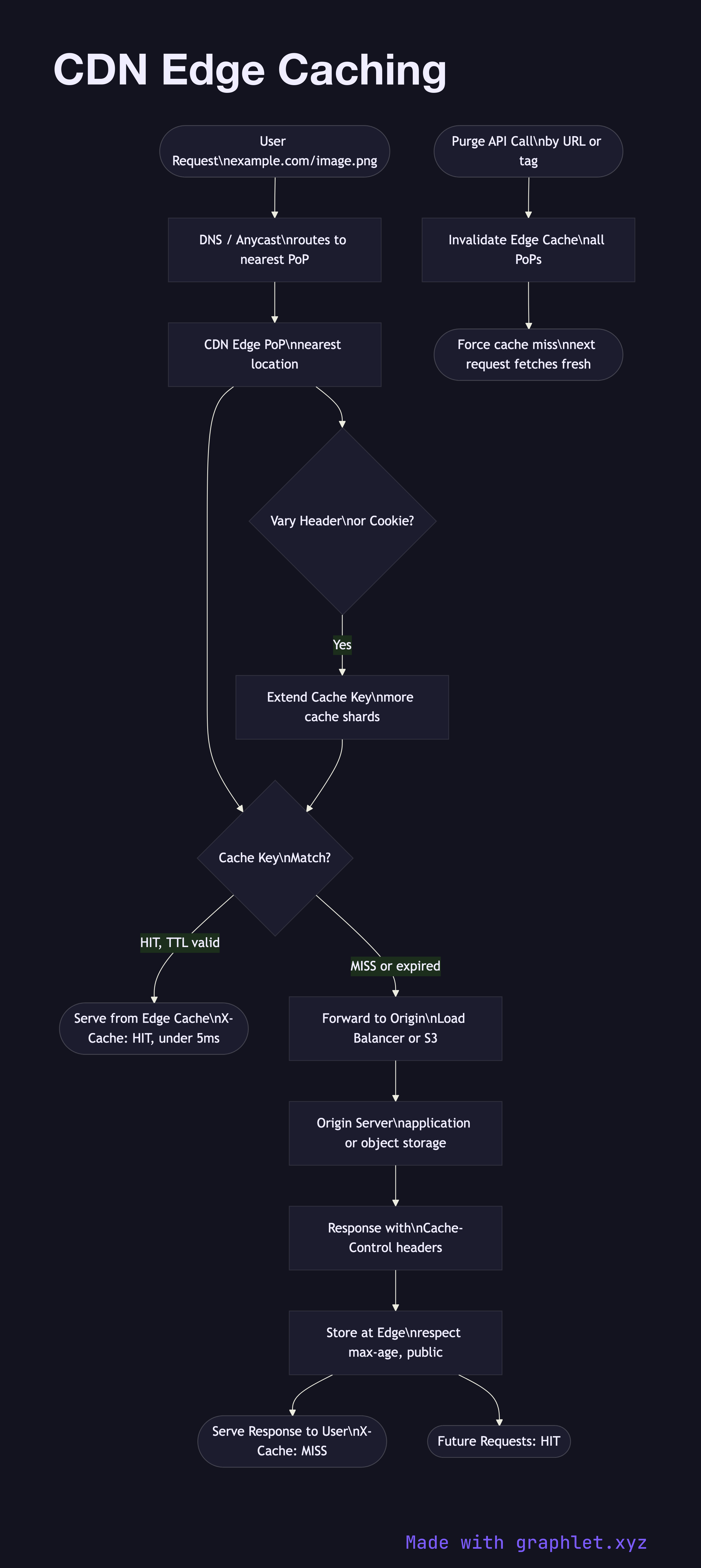

When a user requests a resource, DNS resolution (or anycast routing) directs the request to the nearest PoP. The edge node then looks up the request's cache key (by default, the URL) in its cache store:

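Cache hit: The object is present and has not exceeded its TTL. The edge returns the cached response immediately, without contacting the origin, so latency is minimal (often under 5ms). A response header such as CF-Cache-Status: HIT (Cloudflare) or X-Cache: HIT confirms that the response was served from cache; the exact header name and value vary by provider.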

Cache miss: The object is absent or expired. The edge forwards the request to the origin (the application server, S3 bucket, or load balancer). The origin returns the response with Cache-Control headers (e.g., max-age=3600, public) that tell the CDN whether the response is cacheable and for how long. The edge stores the response and returns it to the user, so subsequent requests for the same cache key are served from cache until the TTL expires.
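The hit and miss paths above can be sketched as a small in-memory model of a single edge node. This is an illustration under stated assumptions, not any CDN's actual implementation: `EdgeCache`, `CachedObject`, `parse_ttl`, and the `fetch_origin` callback are hypothetical names, and the Cache-Control handling is deliberately simplified (it ignores `no-cache`, revalidation, and heuristic freshness).

```python
import re
import time
from dataclasses import dataclass
from typing import Callable, Dict, Optional, Tuple


@dataclass
class CachedObject:
    body: bytes
    expires_at: float  # clock value after which the entry is considered stale


def parse_ttl(cache_control: str) -> Optional[int]:
    """Return a TTL in seconds, or None if the response should not be cached.

    Simplified: only no-store, private, s-maxage and max-age are considered.
    """
    directives = [d.strip().lower() for d in cache_control.split(",")]
    if "no-store" in directives or "private" in directives:
        return None
    # s-maxage targets shared caches such as CDNs, so check it before max-age.
    for name in ("s-maxage", "max-age"):
        for directive in directives:
            match = re.fullmatch(rf"{name}=(\d+)", directive)
            if match:
                return int(match.group(1))
    return None


class EdgeCache:
    """Hypothetical single-PoP cache; fetch_origin returns (body, Cache-Control)."""

    def __init__(
        self,
        fetch_origin: Callable[[str], Tuple[bytes, str]],
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self._fetch_origin = fetch_origin
        self._clock = clock
        self._store: Dict[str, CachedObject] = {}

    def get(self, cache_key: str) -> Tuple[str, bytes]:
        now = self._clock()
        entry = self._store.get(cache_key)
        if entry is not None and now < entry.expires_at:
            # Cache hit: present and within its TTL, origin is not contacted.
            return "HIT", entry.body

        # Cache miss: absent or expired, so go to the origin.
        body, cache_control = self._fetch_origin(cache_key)
        ttl = parse_ttl(cache_control)
        if ttl:
            self._store[cache_key] = CachedObject(body, now + ttl)
        else:
            # Not cacheable (or TTL of zero): make sure no stale copy remains.
            self._store.pop(cache_key, None)
        return "MISS", body
```

With an origin that answers `public, max-age=3600`, the first `get("/app.js")` returns a `MISS` and populates the store, and repeat calls within the next hour return `HIT` without invoking `fetch_origin` again. Injecting the clock is a design choice that makes expiry testable without sleeping.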

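Cache keys determine uniqueness: two requests share a cached object only if their keys match. By default the full URL is the cache key. Vary headers, query parameters, cookies, and geographic location can extend the key, which splits the cache into more variants and lowers the hit ratio. An aggressive Vary header (e.g., on User-Agent) effectively disables caching, because User-Agent strings differ so widely that almost every request produces a distinct key.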
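A minimal sketch of how a key might be assembled from the URL plus the request headers named in Vary. The function `build_cache_key` and its key format are hypothetical; sorting the query parameters is an optional normalization assumed here for illustration, not default behaviour described above.

```python
from typing import Iterable, Mapping
from urllib.parse import parse_qsl, urlencode, urlsplit


def build_cache_key(
    url: str,
    request_headers: Mapping[str, str],
    vary: Iterable[str] = (),
) -> str:
    """Build an illustrative cache key from a URL and the headers listed in Vary."""
    parts = urlsplit(url)

    # Assumed normalization: sort query parameters so that ?a=1&b=2 and
    # ?b=2&a=1 map to the same cached object.
    params = sorted(parse_qsl(parts.query, keep_blank_values=True))

    key = f"{parts.scheme}://{parts.netloc.lower()}{parts.path}"
    if params:
        key += "?" + urlencode(params)

    # Header names are case-insensitive, so compare them in lower case.
    headers = {name.lower(): value for name, value in request_headers.items()}

    # Each header named in Vary adds its request value to the key, so every
    # distinct value creates a separate cached variant.
    for name in sorted(h.lower() for h in vary):
        key += f"|{name}={headers.get(name, '')}"
    return key
```

Varying on `Accept-Encoding` typically yields only a handful of variants per URL, whereas varying on `User-Agent` yields roughly one variant per distinct browser string, which is why the hit ratio collapses.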

Cache invalidation lets operators purge stale content on demand (by URL, tag, or prefix) without waiting for TTL expiry. This is essential after deployments that change the content of static assets served under unchanged URLs.
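The three purge scopes can be illustrated with a toy cache for a single node. `PurgeableCache` and its methods are hypothetical names, and the tag `release-1` in the usage note is made up; a real CDN exposes purging through its own API and has to propagate each purge to every PoP, which this sketch does not model.

```python
from typing import Dict, Iterable, Optional, Set


class PurgeableCache:
    """Toy single-node cache supporting purge by URL, tag, or prefix."""

    def __init__(self) -> None:
        self._objects: Dict[str, bytes] = {}
        self._tags: Dict[str, Set[str]] = {}  # URL -> tags attached at store time

    def put(self, url: str, body: bytes, tags: Iterable[str] = ()) -> None:
        self._objects[url] = body
        self._tags[url] = set(tags)

    def get(self, url: str) -> Optional[bytes]:
        return self._objects.get(url)

    def _evict(self, urls: Iterable[str]) -> int:
        # Materialize first so the dicts are not mutated while being iterated.
        doomed = list(urls)
        for url in doomed:
            del self._objects[url]
            del self._tags[url]
        return len(doomed)

    def purge_url(self, url: str) -> int:
        """Remove exactly one object; returns the number of entries evicted."""
        return self._evict([url] if url in self._objects else [])

    def purge_tag(self, tag: str) -> int:
        """Remove every object that was stored with the given tag."""
        return self._evict(u for u, tags in self._tags.items() if tag in tags)

    def purge_prefix(self, prefix: str) -> int:
        """Remove every object whose URL starts with the given prefix."""
        return self._evict(u for u in self._objects if u.startswith(prefix))
```

For example, if `/static/app.js` and `/static/app.css` were both stored with the tag `release-1`, then `purge_tag("release-1")` or `purge_prefix("/static/")` would evict both in one call, while `purge_url("/static/app.js")` would evict only the script.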

See Edge Computing Architecture for compute at the edge beyond caching, and Object Storage Lifecycle for managing cached assets in origin storage.