IoT Edge Processing

IoT edge processing is the execution of computation — filtering, inference, aggregation, and decision-making — directly on or near the device that produces sensor data, reducing bandwidth consumption and latency compared to routing all data to a central cloud platform first.

The motivation for edge processing is practical: a factory floor with 500 vibration sensors, each sampling at 1 kHz, produces 500,000 samples per second, enough to saturate many WAN links if every sample were forwarded to the cloud. Running a lightweight anomaly-detection model on a local edge node means that only events and exceptions, not raw waveforms, traverse the expensive uplink.
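To put rough numbers on the factory-floor example, the sketch below estimates the sustained uplink rate with and without edge processing. The sensor count and sample rate come from the text. The sample width, event rate, and alert size are illustrative assumptions, not measurements.

```python
# Back-of-envelope uplink estimate. SENSORS and SAMPLE_RATE_HZ come from the
# text; BYTES_PER_SAMPLE, EVENTS_PER_HOUR, and EVENT_BYTES are assumed values.
SENSORS = 500
SAMPLE_RATE_HZ = 1_000
BYTES_PER_SAMPLE = 2      # assumption: 16-bit samples, no framing overhead
EVENTS_PER_HOUR = 20      # assumption: alerts emitted by the edge node
EVENT_BYTES = 512         # assumption: size of one alert message


def raw_uplink_mbit_per_s(sensors: int, rate_hz: int, bytes_per_sample: int) -> float:
    """Sustained bit rate if every sample is forwarded to the cloud."""
    return sensors * rate_hz * bytes_per_sample * 8 / 1_000_000


def event_uplink_kbit_per_s(events_per_hour: int, event_bytes: int) -> float:
    """Average bit rate if only alert messages are forwarded."""
    return events_per_hour * event_bytes * 8 / 3600 / 1_000


raw = raw_uplink_mbit_per_s(SENSORS, SAMPLE_RATE_HZ, BYTES_PER_SAMPLE)
events = event_uplink_kbit_per_s(EVENTS_PER_HOUR, EVENT_BYTES)
print(f"raw: {raw:.1f} Mbit/s, events only: {events:.3f} kbit/s")
```

Under these assumptions the raw stream needs a sustained 8 Mbit/s before any protocol overhead, while the alert traffic averages well under 1 kbit/s. Periodic summary uploads would add to the second figure but are not modelled here.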

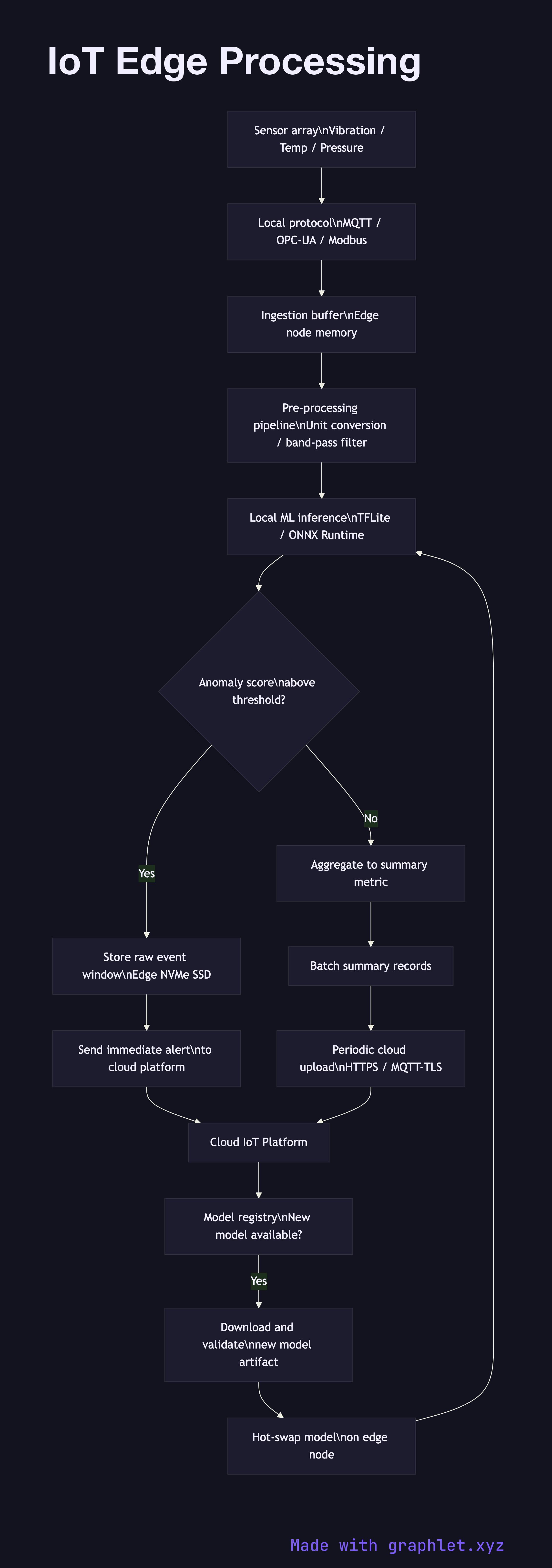

The edge node receives raw sensor streams over a local protocol such as MQTT, OPC-UA, or Modbus. An ingestion buffer absorbs burst traffic. From there, a pre-processing pipeline applies unit conversion, timestamp normalisation, and a configurable band-pass filter to remove DC offset and out-of-band noise. The cleaned stream feeds a local ML inference engine — often a TensorFlow Lite or ONNX Runtime model deployed as a container on the edge node. The model outputs an anomaly score or a classification label.
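The pre-processing stage described above can be sketched in a few lines of plain Python. This is a minimal illustration, not the pipeline's actual implementation: the `Reading` layout, the `COUNTS_PER_G` scale factor, and the cut-off frequencies are hypothetical, and the band-pass is a crude cascade of first-order high-pass and low-pass stages standing in for whatever filter a real deployment configures.

```python
import math
from dataclasses import dataclass

COUNTS_PER_G = 1024.0  # assumption: ADC counts per g of acceleration


@dataclass
class Reading:
    ts_ms: int    # assumed: device timestamp in epoch milliseconds
    counts: int   # assumed: signed raw ADC counts


class BandPass:
    """First-order high-pass followed by first-order low-pass."""

    def __init__(self, low_cut_hz: float, high_cut_hz: float, sample_rate_hz: float):
        dt = 1.0 / sample_rate_hz
        rc_hp = 1.0 / (2 * math.pi * low_cut_hz)
        rc_lp = 1.0 / (2 * math.pi * high_cut_hz)
        self._a_hp = rc_hp / (rc_hp + dt)   # high-pass coefficient
        self._a_lp = dt / (rc_lp + dt)      # low-pass coefficient
        self._prev_x = 0.0
        self._hp = 0.0
        self._lp = 0.0

    def step(self, x: float) -> float:
        # High-pass stage removes the DC offset and slow drift.
        self._hp = self._a_hp * (self._hp + x - self._prev_x)
        self._prev_x = x
        # Low-pass stage attenuates noise above the upper cut-off.
        self._lp += self._a_lp * (self._hp - self._lp)
        return self._lp


def preprocess(readings, band_pass: BandPass):
    """Return (epoch_seconds, filtered_g) pairs for a batch of readings."""
    cleaned = []
    for r in readings:
        ts_s = r.ts_ms / 1000.0             # timestamp normalisation
        accel_g = r.counts / COUNTS_PER_G   # unit conversion
        cleaned.append((ts_s, band_pass.step(accel_g)))
    return cleaned


if __name__ == "__main__":
    bp = BandPass(low_cut_hz=10.0, high_cut_hz=200.0, sample_rate_hz=1000.0)
    batch = [Reading(ts_ms=1_700_000_000_000 + i, counts=2048) for i in range(5)]
    for ts, value in preprocess(batch, bp):
        print(ts, round(value, 4))
```

The filter keeps its state between calls, so successive batches from the ingestion buffer can be passed to the same `BandPass` instance without introducing discontinuities at batch boundaries.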

A decision router examines the inference result. For normal operating conditions, the result is aggregated into a summary metric and batched for periodic upload. For anomalous conditions, the full raw window around the event is stored locally in a short-term edge store (NVMe SSD), and an immediate alert is sent to the cloud. This design ensures the forensic raw data is available without requiring continuous raw-data upload.
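A minimal sketch of such a decision router follows. The threshold, window size, and batch size are assumed values, and the `edge_store` and `uplink` lists are hypothetical stand-ins for the NVMe-backed store and the cloud client. For brevity the sketch keeps only the samples leading up to an event; capturing a post-event tail would need additional state.

```python
from collections import deque


class DecisionRouter:
    def __init__(self, threshold: float = 0.8, window_size: int = 2000,
                 batch_size: int = 60):
        self.threshold = threshold                  # assumed anomaly-score cut-off
        self.raw_ring = deque(maxlen=window_size)   # most recent raw samples
        self.batch_size = batch_size                # normal results per summary
        self._scores = []
        self.edge_store = []   # stand-in for the short-term edge store
        self.uplink = []       # stand-in for messages sent to the cloud

    def on_sample(self, sample: float) -> None:
        """Record a raw sample; the oldest one is dropped once the ring is full."""
        self.raw_ring.append(sample)

    def on_result(self, ts: float, score: float) -> str:
        """Route one inference result and report what was done with it."""
        if score >= self.threshold:
            # Anomaly: keep the raw window locally and alert immediately.
            self.edge_store.append({"ts": ts, "raw": list(self.raw_ring)})
            self.uplink.append({"type": "alert", "ts": ts, "score": score})
            return "alert"
        # Normal: aggregate and upload only a periodic summary.
        self._scores.append(score)
        if len(self._scores) >= self.batch_size:
            self.uplink.append({
                "type": "summary",
                "ts": ts,
                "count": len(self._scores),
                "mean_score": sum(self._scores) / len(self._scores),
                "max_score": max(self._scores),
            })
            self._scores.clear()
            return "summary"
        return "buffered"


if __name__ == "__main__":
    router = DecisionRouter(threshold=0.8, window_size=4, batch_size=3)
    for sample in range(10):
        router.on_sample(float(sample))
    for ts, score in enumerate([0.1, 0.2, 0.3, 0.95]):
        print(ts, router.on_result(float(ts), score))
```

Using a bounded `deque` means the raw window costs a fixed amount of memory regardless of how long the node runs between anomalies.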

The edge node also caches its model registry: when the cloud publishes a new model version, the edge node downloads, validates, and hot-swaps it without interrupting the running inference pipeline. For the broader device-to-cloud context, see IoT Device Data Flow. For gateway-level protocol translation, see IoT Gateway Architecture. For the cloud-side complement to edge processing, see cloud/edge-computing-architecture.
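The validate-and-hot-swap step for model updates might look like the sketch below. It assumes the download has already produced the model bytes and that the cloud publishes a SHA-256 digest alongside each version. `ModelSlot`, `loader`, and `probe` are hypothetical names: in practice `loader` would wrap whichever runtime the node uses, and the smoke test would be more thorough than a single score range check.

```python
import hashlib
import threading
from typing import Callable, Sequence

Model = Callable[[Sequence[float]], float]  # maps a sample window to a score


class ModelSlot:
    """Holds the active model and replaces it without pausing inference."""

    def __init__(self, model: Model, version: str):
        self._lock = threading.Lock()
        self._model = model
        self.version = version

    def predict(self, window: Sequence[float]) -> float:
        # Take a reference under the lock, then run inference outside it so
        # a swap never waits on a long-running prediction.
        with self._lock:
            model = self._model
        return model(window)

    def try_swap(self, blob: bytes, expected_sha256: str, version: str,
                 loader: Callable[[bytes], Model],
                 probe: Sequence[float]) -> bool:
        """Validate a downloaded model and activate it only if it passes."""
        # 1. Integrity check against the published digest.
        if hashlib.sha256(blob).hexdigest() != expected_sha256:
            return False
        # 2. Smoke test: the candidate must load and score a probe window.
        try:
            candidate = loader(blob)
            score = candidate(probe)
        except Exception:
            return False
        if not 0.0 <= score <= 1.0:
            return False
        # 3. Swap the reference; in-flight predictions finish on the old model.
        with self._lock:
            self._model = candidate
            self.version = version
        return True


if __name__ == "__main__":
    slot = ModelSlot(lambda window: 0.1, "v1")
    blob = b"model-v2-bytes"  # placeholder for a downloaded model file
    digest = hashlib.sha256(blob).hexdigest()
    swapped = slot.try_swap(blob, digest, "v2", lambda b: (lambda w: 0.9), [0.0])
    print(swapped, slot.version, slot.predict([0.0]))
```

Because a failed validation leaves the slot untouched, a corrupt or incompatible download never replaces the model that is currently serving predictions.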