Scalable Web Architecture

A scalable web architecture is designed to handle increasing user load by distributing traffic, caching aggressively, and separating stateless compute from stateful storage so each tier can scale independently.

What the diagram shows

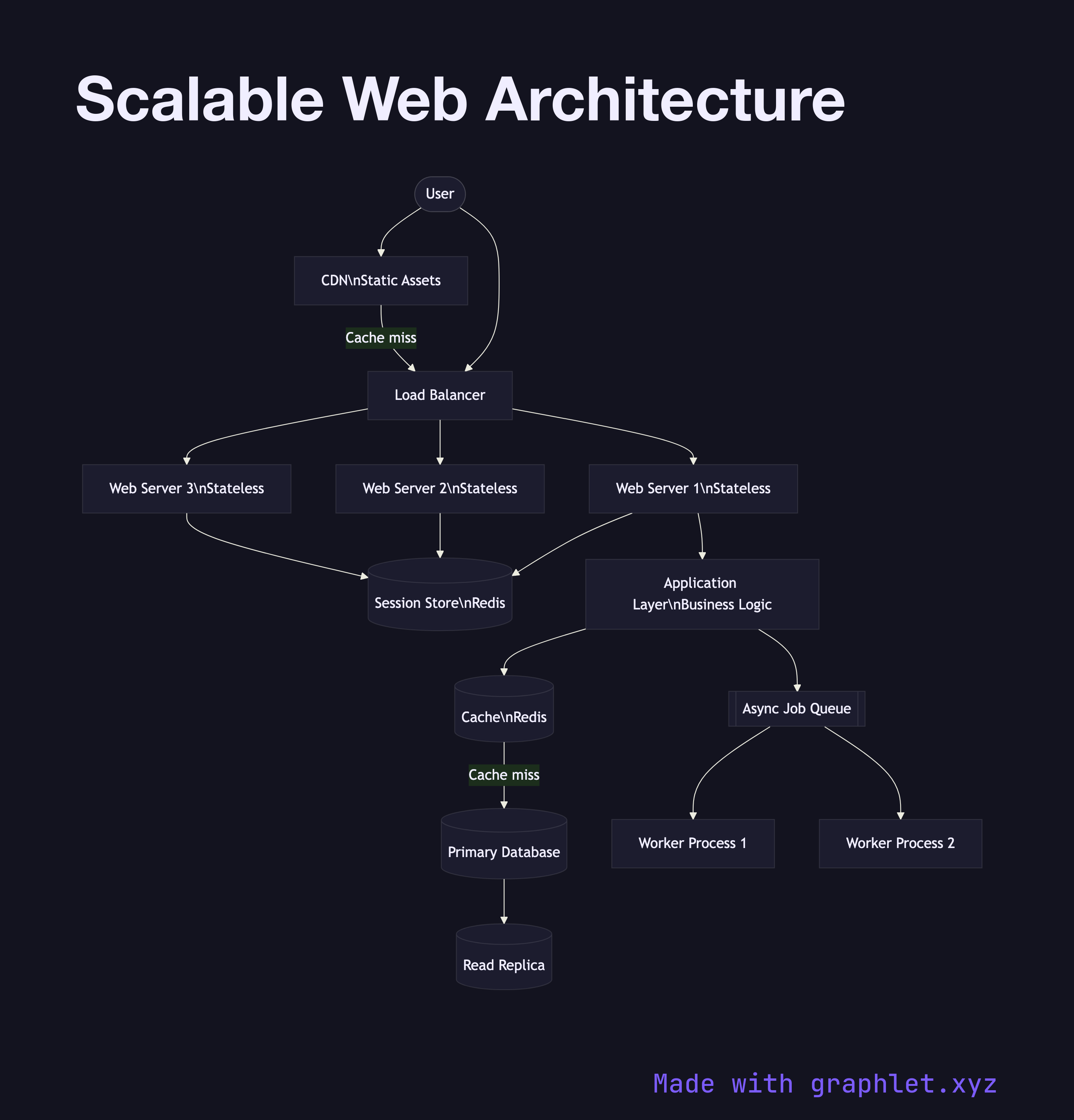

Requests arrive at a CDN (Content Delivery Network), which serves static assets — images, CSS, JavaScript — from edge nodes close to the user without touching the origin. Dynamic requests pass through to a Load Balancer, which distributes them across a pool of Stateless Web Servers. Because session state is stored externally in a Session Store (Redis), any web server can handle any request, making horizontal scaling straightforward.
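A minimal Python sketch of why externalised session state makes every web server interchangeable. It is illustrative only: a plain dict stands in for Redis, and the class names (`SessionStore`, `WebServer`, `LoadBalancer`) and the round-robin routing policy are hypothetical choices, not part of the described system.

```python
from __future__ import annotations

import itertools


class SessionStore:
    """Hypothetical stand-in for the external Session Store.

    Assumption: a plain dict replaces Redis so the sketch runs without a server.
    """

    def __init__(self) -> None:
        self._data: dict = {}

    def get(self, session_id: str) -> dict:
        # Return a copy, as a network store would, so servers never share objects.
        return dict(self._data.get(session_id, {}))

    def put(self, session_id: str, session: dict) -> None:
        self._data[session_id] = dict(session)


class WebServer:
    """Stateless web server: it keeps no per-user data between requests."""

    def __init__(self, name: str, sessions: SessionStore) -> None:
        self.name = name
        self.sessions = sessions

    def handle(self, session_id: str) -> str:
        # Load the session from the shared store, update it, and write it back.
        session = self.sessions.get(session_id)
        session["visits"] = session.get("visits", 0) + 1
        self.sessions.put(session_id, session)
        return f"{self.name}: visit {session['visits']}"


class LoadBalancer:
    """Round-robin load balancer (an assumed policy; many others exist)."""

    def __init__(self, servers: list[WebServer]) -> None:
        self._cycle = itertools.cycle(servers)

    def route(self, session_id: str) -> str:
        return next(self._cycle).handle(session_id)


store = SessionStore()
lb = LoadBalancer([WebServer(f"web-{i}", store) for i in range(3)])
for _ in range(4):
    print(lb.route("session-abc"))  # hypothetical session id
```

Each request lands on a different server (`web-0`, `web-1`, `web-2`, then `web-0` again), yet the visit counter climbs from 1 to 4, because the state lives in the store rather than in any server.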

The web servers call an Application Layer (business logic), which checks a Cache first and queries the Primary Database only on a cache miss. The primary replicates to one or more Read Replicas to spread read load. Heavy background workloads (report generation, email delivery, data exports) are pushed onto an Async Job Queue and consumed by Worker Processes that run independently of the request path.
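A minimal Python sketch of this application-layer flow: a cache-aside read and a heavy job handed to a queue instead of being run inline. Several parts are assumptions of the sketch, not of the described system: dicts stand in for the cache and databases, cache misses are routed to a randomly chosen read replica, and a thread stands in for a separate worker process. Writes, which would go to the primary and invalidate the cached key, are left out to keep it short.

```python
from __future__ import annotations

import queue
import random
import threading


class Database:
    """Hypothetical database node (primary or replica); a dict stands in for storage."""

    def __init__(self, name: str, rows: dict[str, str]) -> None:
        self.name = name
        self.rows = rows
        self.reads = 0  # counts reads so the effect of the cache is visible

    def read(self, key: str) -> str | None:
        self.reads += 1
        return self.rows.get(key)


class AppLayer:
    """Business-logic tier: cache-aside reads plus offloading of heavy jobs."""

    def __init__(self, primary: Database, replicas: list[Database], jobs: queue.Queue) -> None:
        self.cache: dict[str, str] = {}  # assumption: in-process dict, not a shared cache
        self.primary = primary
        self.replicas = replicas
        self.jobs = jobs

    def get(self, key: str) -> str | None:
        # 1. Cache hit: no database read at all.
        if key in self.cache:
            return self.cache[key]
        # 2. Cache miss: read a replica (assumed policy), or the primary if none exist.
        node = random.choice(self.replicas) if self.replicas else self.primary
        value = node.read(key)
        # 3. Populate the cache so the next read for this key is a hit.
        if value is not None:
            self.cache[key] = value
        return value

    def request_report(self, user: str) -> str:
        # Heavy work is queued; the request returns immediately.
        self.jobs.put(("generate_report", user))
        return "accepted"


def worker(jobs: queue.Queue, done: list) -> None:
    """Worker stand-in: drains the queue off the request path."""
    while True:
        job = jobs.get()
        if job is None:  # sentinel value tells the worker to stop
            jobs.task_done()
            return
        done.append(job)  # placeholder for the actual report generation
        jobs.task_done()


jobs: queue.Queue = queue.Queue()
done: list = []
thread = threading.Thread(target=worker, args=(jobs, done), daemon=True)
thread.start()

primary = Database("primary", {"user:1": "Ada"})
# Assumption: replication has already copied the primary's rows to each replica.
replicas = [Database(f"replica-{i}", dict(primary.rows)) for i in range(2)]
app = AppLayer(primary, replicas, jobs)

print(app.get("user:1"))  # miss: one replica read, then cached
print(app.get("user:1"))  # hit: served from the cache
print(app.request_report("user:1"))

jobs.put(None)  # stop the worker once queued jobs are drained
thread.join()
print(done)
```

The second `get` never touches a database, and `request_report` returns before the job runs; the worker picks the job up on its own schedule.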

Why this matters

The key principle is to isolate stateful components (databases, caches, the session store) from stateless ones (web servers, workers). Stateless components can be cloned freely behind a load balancer; stateful components need replication or partitioning to scale. For content-heavy applications, a CDN alone can offload as much as 80–90% of origin traffic, depending on how much of that traffic is cacheable. For keeping the system available when components fail, see High Availability System. For extending this pattern across regions, see Multi Region Deployment.
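The 80–90% CDN figure can be read as an edge cache hit ratio. A worked example, assuming a hypothetical load of 10,000 requests per second and writing h for the fraction of requests the CDN answers without contacting the origin:

```latex
\begin{aligned}
R_{\text{origin}} &= (1 - h)\,R_{\text{total}} \\
h = 0.85,\ R_{\text{total}} = 10\,000~\text{req/s}
  \;&\Rightarrow\; R_{\text{origin}} = 0.15 \times 10\,000 = 1\,500~\text{req/s}
\end{aligned}
```

At h = 0.8 and h = 0.9 the origin would see 2,000 and 1,000 requests per second respectively, so only the remaining fraction has to be absorbed by the load balancer and web tier.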