AI Tool Calling Flow

AI tool calling (also called function calling) is the mechanism by which a language model identifies the need for external information or action during generation, emits a structured tool invocation request, and resumes generation after receiving the tool's result.

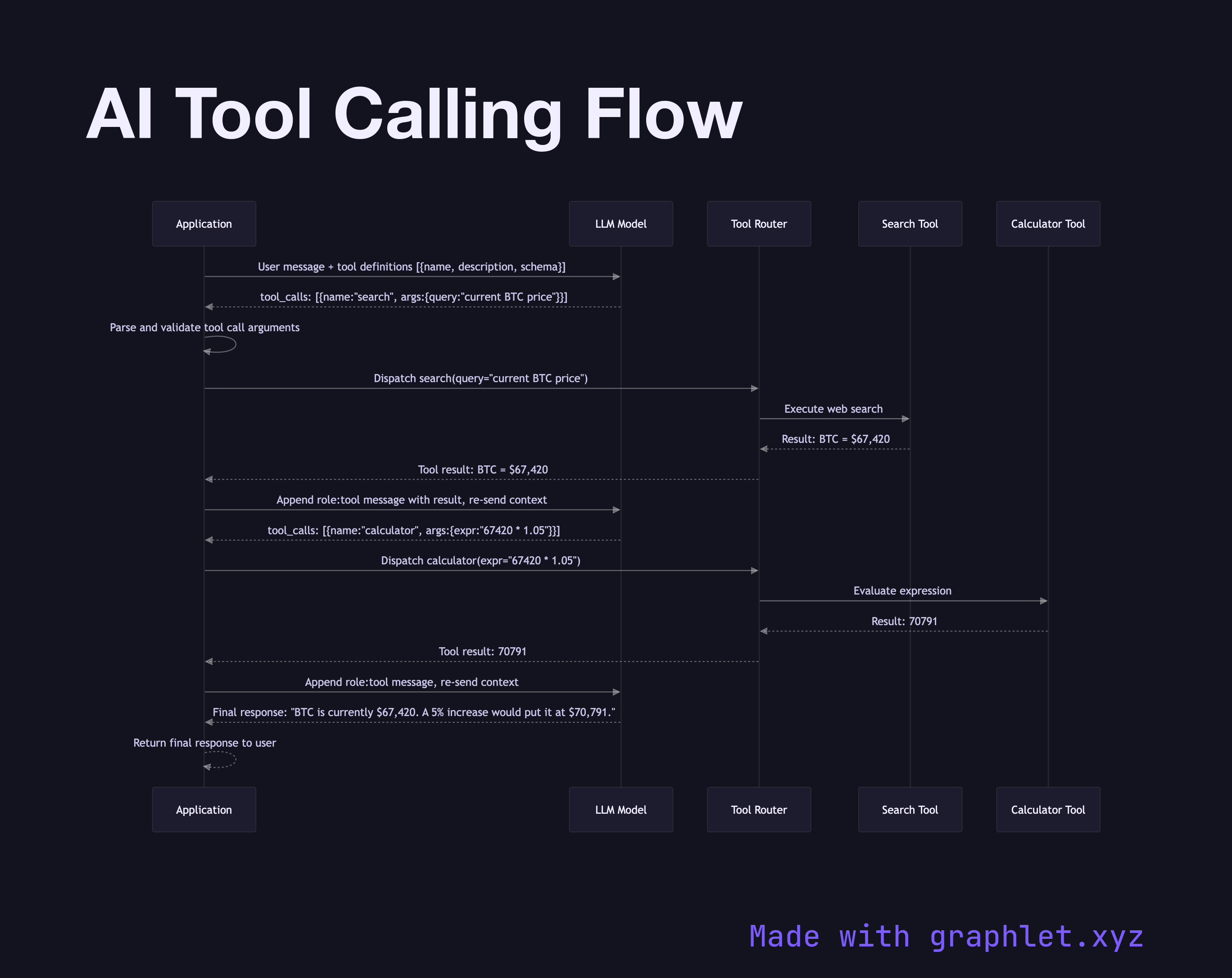

What the diagram shows

This sequence diagram traces a single tool-calling cycle between an application, the LLM, and two external tools:

1. User request: the application sends a user message along with a list of available tool definitions (name, description, JSON schema for parameters) in the API request.
2. LLM reasoning: the model determines that it needs external data to answer accurately and emits a tool_calls object instead of a text response, specifying the tool name and arguments.
3. Application parses tool call: the application layer extracts the tool name and validates the arguments against the expected schema.
4. Tool routing: the application routes the call to the correct tool implementation, such as a web search engine, calculator, database query, or external API.
5. Tool execution: the tool executes and returns a structured result.
6. Result injection: the application adds the tool result to the conversation as a role: tool message and re-sends the full context to the LLM.
7. LLM final generation: with the tool result in context, the model generates a final natural-language response that incorporates the new information.
8. Response returned: the final response is delivered to the user.
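The cycle above can be sketched in a few lines of Python. This is a minimal illustration, not any provider's actual API: `fake_llm` is a hypothetical stand-in for the model call, the message and tool-call shapes are only loosely modeled on common chat-style APIs, and the `calculator` tool and `validate_args` helper are invented for the example. The validation is deliberately shallow (required and unknown keys only), not full JSON Schema checking.

```python
import json

# Step 1: tool definitions sent with the request (name, description, JSON schema).
TOOLS = {
    "calculator": {
        "description": "Add two numbers.",
        "parameters": {
            "type": "object",
            "properties": {"a": {"type": "number"}, "b": {"type": "number"}},
            "required": ["a", "b"],
        },
    }
}

def calculator(a, b):
    """Hypothetical tool implementation returning a structured result (step 5)."""
    return {"sum": a + b}

# Step 4: routing table from tool name to implementation.
TOOL_IMPLS = {"calculator": calculator}

def fake_llm(messages, tools):
    """Hypothetical stand-in for a model API call (assumption, not a real client)."""
    tool_msgs = [m for m in messages if m["role"] == "tool"]
    if not tool_msgs:
        # Step 2: no tool result in context yet, so emit a tool call instead of text.
        return {
            "role": "assistant",
            "content": None,
            "tool_calls": [
                {"id": "call_1", "name": "calculator",
                 "arguments": json.dumps({"a": 2, "b": 3})}
            ],
        }
    # Step 7: a tool result is in context, so produce the final answer.
    result = json.loads(tool_msgs[-1]["content"])
    return {"role": "assistant", "content": f"The sum is {result['sum']}."}

def validate_args(args, schema):
    """Shallow check: required keys present, no unknown keys (not full JSON Schema)."""
    missing = [k for k in schema.get("required", []) if k not in args]
    unknown = [k for k in args if k not in schema.get("properties", {})]
    if missing or unknown:
        raise ValueError(f"bad arguments: missing={missing}, unknown={unknown}")

def run_cycle(user_text):
    messages = [{"role": "user", "content": user_text}]
    reply = fake_llm(messages, TOOLS)              # steps 1-2
    messages.append(reply)
    tool_calls = reply.get("tool_calls", [])
    for call in tool_calls:
        if call["name"] not in TOOL_IMPLS:
            raise ValueError(f"unknown tool: {call['name']}")
        args = json.loads(call["arguments"])       # step 3: parse
        validate_args(args, TOOLS[call["name"]]["parameters"])
        result = TOOL_IMPLS[call["name"]](**args)  # steps 4-5: route and execute
        messages.append({                          # step 6: inject the result
            "role": "tool",
            "tool_call_id": call["id"],
            "content": json.dumps(result),
        })
    if tool_calls:
        reply = fake_llm(messages, TOOLS)          # step 7: re-send full context
        messages.append(reply)
    return reply["content"], messages              # step 8

answer, messages = run_cycle("What is 2 + 3?")
print(answer)
```

Keeping the routing table separate from the tool definitions mirrors the split in the steps: the model only ever sees the definitions, while the application alone decides which code actually runs.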

Why this matters

Without tools, an LLM can draw only on what it learned during training. Tool calling lets it request live data, code execution, and actions in external systems, with the application carrying out each call on the model's behalf and returning the result. See AI Agent Workflow for how multi-step tool calling fits into a broader agentic loop.