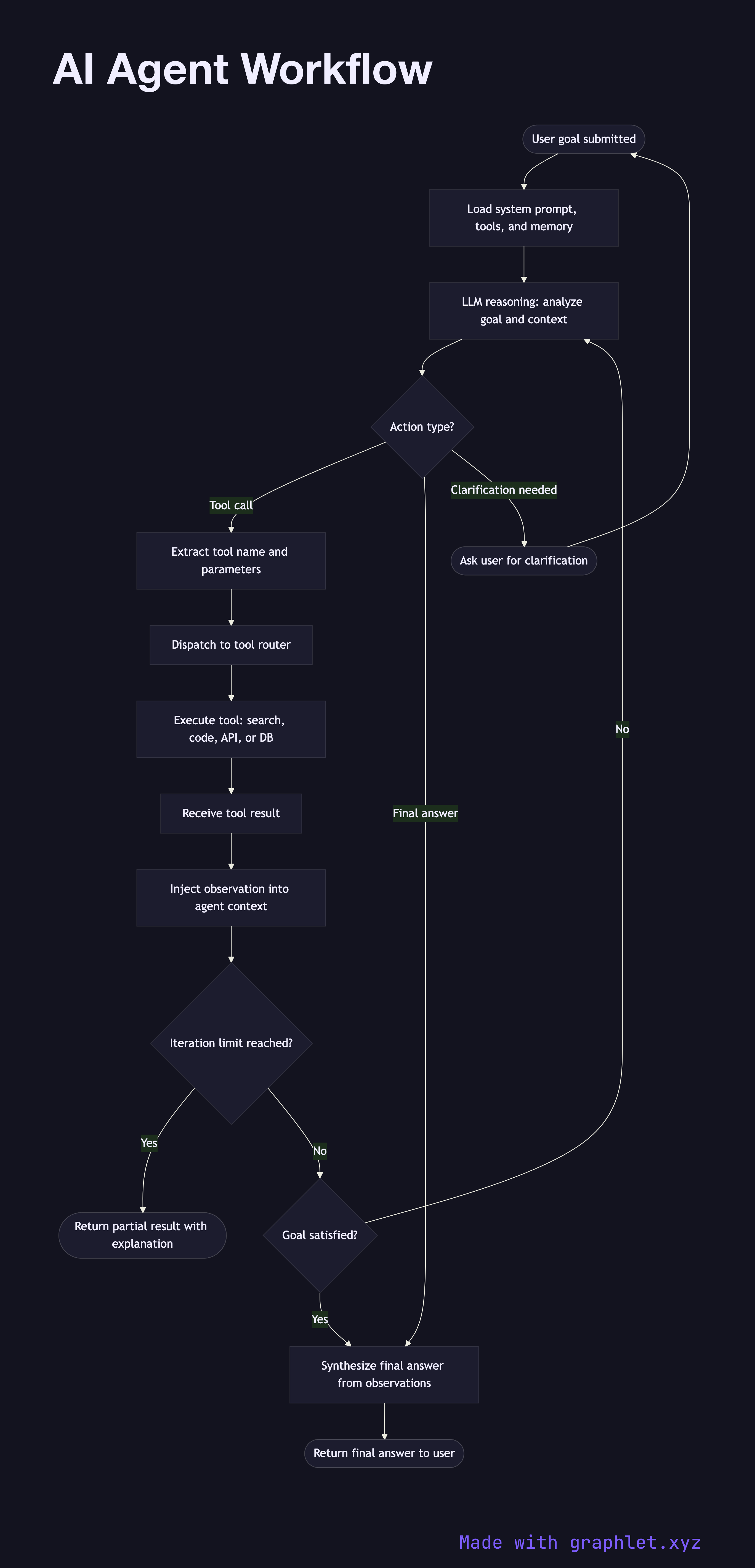

AI Agent Workflow

An AI agent workflow is the autonomous plan-act-observe loop that allows a language model to break down a complex goal into sub-tasks, execute actions via tool calls, observe the results, and iteratively refine its approach until the goal is satisfied.

What the diagram shows

This flowchart maps the ReAct-style (Reasoning + Acting) loop that many production AI agents are built around:

1. User goal: a high-level objective is submitted to the agent (e.g., "Research the top 5 competitors and write a summary report").
2. Agent initializes: the agent loads its system prompt, available tool definitions, and any relevant memory from previous sessions.
3. LLM reasoning step: the LLM receives the current context (goal, prior observations, tool results) and produces a structured thought about what to do next.
4. Action decision: the model decides whether to call a tool, generate a final answer, or request clarification.
5. Tool dispatch: if a tool call is required, the tool name and parameters are extracted and dispatched to the tool router (see AI Tool Calling Flow).
6. Tool execution: the tool (web search, code interpreter, database query, API call) executes and returns a result.
7. Observation injection: the tool result is injected back into the agent's context as an observation, extending the working memory.
8. Goal satisfied?: the agent evaluates whether the accumulated observations and tool outputs are sufficient to satisfy the original goal.
9. Final answer: if the goal is met, the agent synthesizes the observations into a coherent final response.
10. Max iterations check: a hard iteration limit prevents infinite loops. If exceeded, the agent returns a partial result with an explanation.
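
The steps above can be sketched as a small loop. This is a minimal illustration and not any particular framework's API: `Decision`, `run_agent`, `scripted_decide`, `fake_search`, and `TOOLS` are hypothetical names, and the LLM reasoning step is replaced by a scripted stub so the example runs without a model.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Decision:
    """Outcome of one reasoning step (hypothetical structure for this sketch)."""
    kind: str                      # "tool", "final", or "clarify"
    tool: str = ""                 # tool name when kind == "tool"
    args: dict = field(default_factory=dict)
    text: str = ""                 # final answer or clarification question


def run_agent(goal: str,
              decide: Callable[[str, list], Decision],
              tools: dict,
              max_iterations: int = 5) -> dict:
    observations = []              # working memory built up by the loop
    for _ in range(max_iterations):
        # Steps 3-4: reason over the goal and prior observations, pick an action.
        decision = decide(goal, observations)
        if decision.kind == "final":
            # Steps 8-9: the goal is judged satisfied, so return the answer.
            return {"status": "done", "answer": decision.text,
                    "tool_calls": len(observations)}
        if decision.kind == "clarify":
            return {"status": "needs_clarification", "answer": decision.text,
                    "tool_calls": len(observations)}
        # Steps 5-6: dispatch to the named tool and execute it.
        handler = tools.get(decision.tool)
        if handler is None:
            result = f"error: unknown tool '{decision.tool}'"
        else:
            result = handler(**decision.args)
        # Step 7: inject the result back into the context as an observation.
        observations.append(f"{decision.tool} -> {result}")
    # Step 10: iteration limit reached, so return a partial result with a reason.
    return {"status": "partial",
            "answer": f"Stopped after {max_iterations} iterations.",
            "tool_calls": len(observations)}


def scripted_decide(goal: str, observations: list) -> Decision:
    """Stand-in for the LLM: search once, then answer."""
    if not observations:
        return Decision(kind="tool", tool="search", args={"query": goal})
    return Decision(kind="final",
                    text=f"Summary based on {len(observations)} observation(s).")


def fake_search(query: str) -> str:
    """Stand-in for a real web search tool."""
    return f"3 results for '{query}'"


TOOLS = {"search": fake_search}

result = run_agent("Research the top 5 competitors", scripted_decide, TOOLS)
print(result["status"], result["tool_calls"])
```

In this sketch the iteration limit is enforced by the loop itself and not by the model, so a decision function that keeps requesting tools still terminates with a `"partial"` status. A real agent would replace `scripted_decide` with a model call and would typically pass structured tool definitions instead of a plain dictionary.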

Why this matters

The ReAct loop is what separates stateless chatbots from goal-directed agents. Understanding the loop structure helps engineers set appropriate iteration limits, design effective tool interfaces, and debug runaway agent behavior.