Kubernetes Service Routing

A Kubernetes Service is a stable virtual IP and DNS name that provides a consistent endpoint for a set of pods, abstracting away the ephemeral nature of pod IP addresses and enabling both internal cluster communication and external traffic ingress.

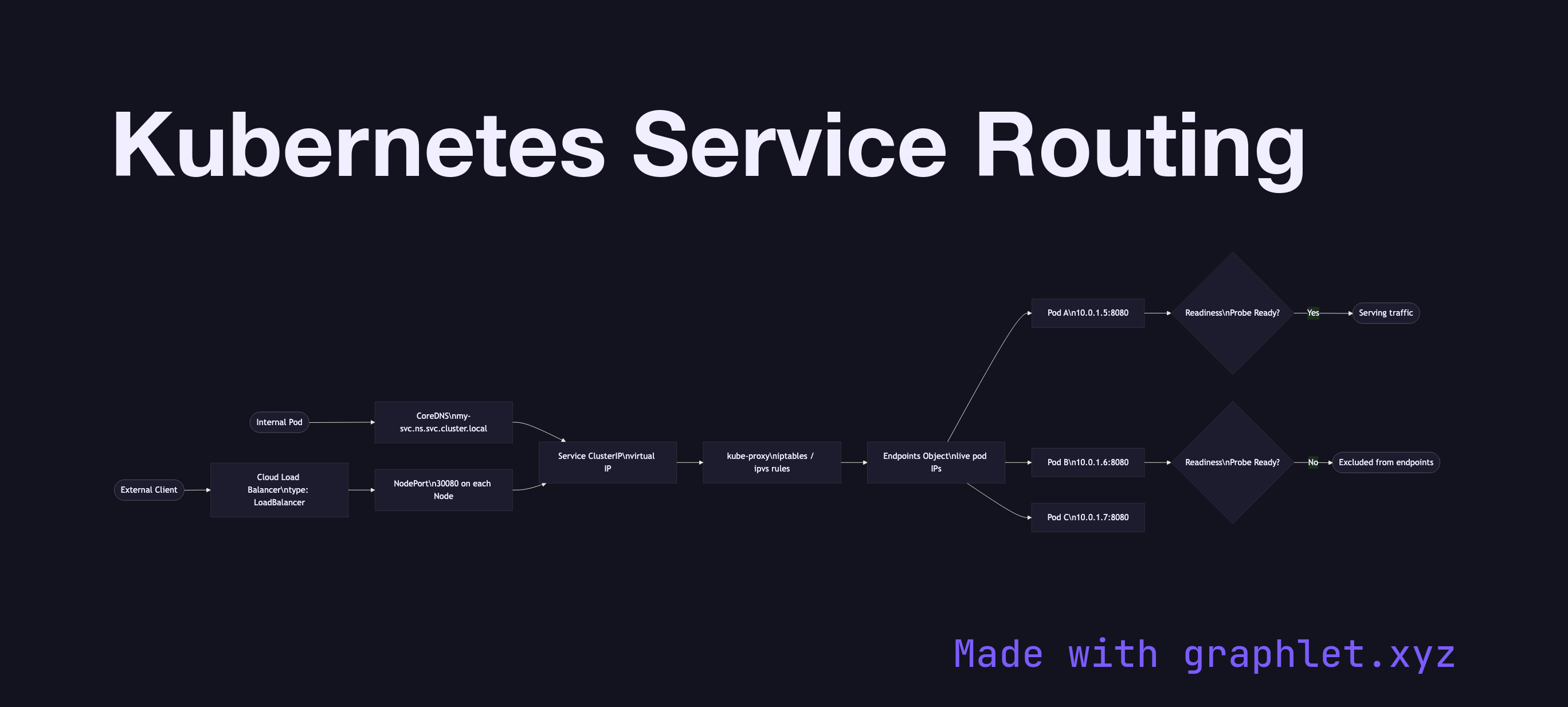

Pods are mortal: they are created and destroyed as deployments scale or roll out updates, and each new pod receives a new IP address. A Service solves this by keeping a stable ClusterIP while the control plane updates the Service's Endpoints (or EndpointSlice) objects whenever the set of pods matching its label selector changes. kube-proxy on each node watches these objects and programs iptables or IPVS rules that forward traffic destined for the ClusterIP to one of the ready pod IPs.
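The selector-to-endpoints relationship can be illustrated with a small simulation. This is a minimal sketch, not how Kubernetes implements it: the `Pod` and `Service` classes, the function names, and all IP addresses are hypothetical stand-ins, and only equality-based label matching is modeled.

```python
from dataclasses import dataclass, field


@dataclass
class Pod:
    """Hypothetical stand-in for a pod: just a name, an IP, and labels."""
    name: str
    ip: str
    labels: dict = field(default_factory=dict)


@dataclass
class Service:
    """Hypothetical stand-in for a Service: a fixed ClusterIP plus a selector."""
    name: str
    cluster_ip: str
    selector: dict = field(default_factory=dict)


def selector_matches(selector: dict, labels: dict) -> bool:
    """Equality-based matching: every selector pair must appear in the labels."""
    return all(labels.get(key) == value for key, value in selector.items())


def endpoints_for(service: Service, pods: list) -> list:
    """Return the IPs of pods whose labels satisfy the Service's selector."""
    if not service.selector:
        # A Service without a selector gets no automatically managed endpoints.
        return []
    return [pod.ip for pod in pods if selector_matches(service.selector, pod.labels)]


web = Service(name="web", cluster_ip="10.96.0.10", selector={"app": "web"})

before_rollout = [
    Pod("web-1", "10.244.1.5", {"app": "web"}),
    Pod("web-2", "10.244.2.7", {"app": "web"}),
    Pod("db-1", "10.244.1.9", {"app": "db"}),
]
# After a rollout the web pods are replaced and come back with new IPs.
after_rollout = [
    Pod("web-3", "10.244.3.4", {"app": "web"}),
    Pod("web-4", "10.244.1.8", {"app": "web"}),
    Pod("db-1", "10.244.1.9", {"app": "db"}),
]

endpoints_before = endpoints_for(web, before_rollout)
endpoints_after = endpoints_for(web, after_rollout)

# The ClusterIP is the same in both lines; only the backing pod IPs differ.
print(web.cluster_ip, endpoints_before)
print(web.cluster_ip, endpoints_after)
```

Clients keep addressing `web.cluster_ip` throughout; only the endpoint list behind it changes.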

Kubernetes offers four Service types:

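ClusterIP (default): Accessible only within the cluster. Other pods resolve the service via its DNS name (my-service.my-namespace.svc.cluster.local, where cluster.local is the default cluster domain). kube-proxy spreads connections across the ready matching pods: in iptables mode it picks a backend at random, while IPVS mode uses round-robin by default.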

NodePort: Exposes the service on the same static port on every node's IP address. The port is taken from a configurable range (30000–32767 by default). Useful for development or when no cloud load balancer is available.

LoadBalancer: Provisions a cloud provider load balancer (AWS ELB, GCP CLB, Azure LB) that typically forwards external traffic to the NodePort and then to pods. This is the standard production exposure mechanism for non-HTTP services.

ExternalName: Maps the service to an external DNS name (e.g., an RDS hostname) by returning a CNAME record. No selector, ClusterIP, or proxying is involved; in-cluster pods simply reference the external service by its Kubernetes DNS name.
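The four types differ mainly in a few fields of the Service spec. The sketch below builds the manifests as plain Python dictionaries to make those differences visible. The helper names, service names, ports, and the hostname `mydb.example.com` are hypothetical; the manifest keys (`type`, `selector`, `ports`, `port`, `targetPort`, `nodePort`, `externalName`) are the standard `v1` Service fields, and the NodePort check assumes the default range.

```python
import json

# Default NodePort range; cluster administrators can configure a different one.
DEFAULT_NODE_PORTS = range(30000, 32768)


def service_dns_name(name, namespace, cluster_domain="cluster.local"):
    """In-cluster DNS name of a Service, assuming the default cluster domain."""
    return f"{name}.{namespace}.svc.{cluster_domain}"


def service_manifest(name, namespace, service_type="ClusterIP", selector=None,
                     port=80, target_port=8080, node_port=None,
                     external_name=None):
    """Build a Service manifest as a dict (illustrative helper, not a client API)."""
    spec = {"type": service_type}
    if service_type == "ExternalName":
        if not external_name:
            raise ValueError("ExternalName Services need an external DNS name")
        # No selector or ports: cluster DNS simply answers with a CNAME.
        spec["externalName"] = external_name
    else:
        port_entry = {"port": port, "targetPort": target_port}
        if node_port is not None:
            if service_type not in ("NodePort", "LoadBalancer"):
                raise ValueError("nodePort only applies to NodePort/LoadBalancer")
            if node_port not in DEFAULT_NODE_PORTS:
                raise ValueError(f"nodePort {node_port} is outside 30000-32767")
            port_entry["nodePort"] = node_port
        spec["selector"] = dict(selector or {})
        spec["ports"] = [port_entry]
    return {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {"name": name, "namespace": namespace},
        "spec": spec,
    }


cluster_ip_svc = service_manifest("my-service", "my-namespace",
                                  selector={"app": "web"})
node_port_svc = service_manifest("my-service", "my-namespace", "NodePort",
                                 selector={"app": "web"}, node_port=30080)
load_balancer_svc = service_manifest("my-service", "my-namespace",
                                     "LoadBalancer", selector={"app": "web"})
external_name_svc = service_manifest("my-db", "my-namespace", "ExternalName",
                                     external_name="mydb.example.com")

print(service_dns_name("my-service", "my-namespace"))
print(json.dumps(node_port_svc, indent=2))
```

Because JSON is valid YAML, the printed manifest could be saved to a file and applied with `kubectl apply -f`, though in practice manifests are usually written by hand or templated.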

Traffic routing to pods is gated by readiness probes — only pods reporting Ready receive traffic. See Kubernetes Ingress Routing for HTTP-level routing and Kubernetes Pod Lifecycle for pod state transitions.
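Readiness gating can be pictured as a filter applied before a backend is chosen. The following is a simplified sketch under stated assumptions: the endpoint map, the IP addresses, and the function names are hypothetical, and the random choice only imitates the behavior of kube-proxy's iptables mode rather than reproducing its actual rules.

```python
import random


def ready_backends(endpoints: dict) -> list:
    """Keep only the pod IPs whose readiness flag is True."""
    return [ip for ip, ready in endpoints.items() if ready]


def pick_backend(endpoints: dict, rng: random.Random):
    """Choose one ready backend at random, or None if no pod is ready."""
    candidates = ready_backends(endpoints)
    if not candidates:
        return None
    return rng.choice(candidates)


# Hypothetical endpoint state: pod IP -> result of its readiness probe.
endpoints = {
    "10.244.1.5": True,
    "10.244.2.7": False,  # failing its readiness probe, so it gets no traffic
    "10.244.3.4": True,
}

rng = random.Random(0)  # seeded so repeated runs behave the same way
chosen = {pick_backend(endpoints, rng) for _ in range(100)}
print(sorted(chosen))
```

When every pod is unready, `pick_backend` returns `None`, which corresponds to a Service that has no endpoints able to accept connections.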