Distributed Hash Table

A distributed hash table (DHT) is a decentralized data structure that partitions ownership of a key-value store across a set of participating nodes, enabling any node to efficiently route a lookup to whichever node is responsible for a given key — without any central directory.

A distributed hash table (DHT) is a decentralized data structure that partitions ownership of a key-value store across a set of participating nodes, enabling any node to efficiently route a lookup to whichever node is responsible for a given key — without any central directory.

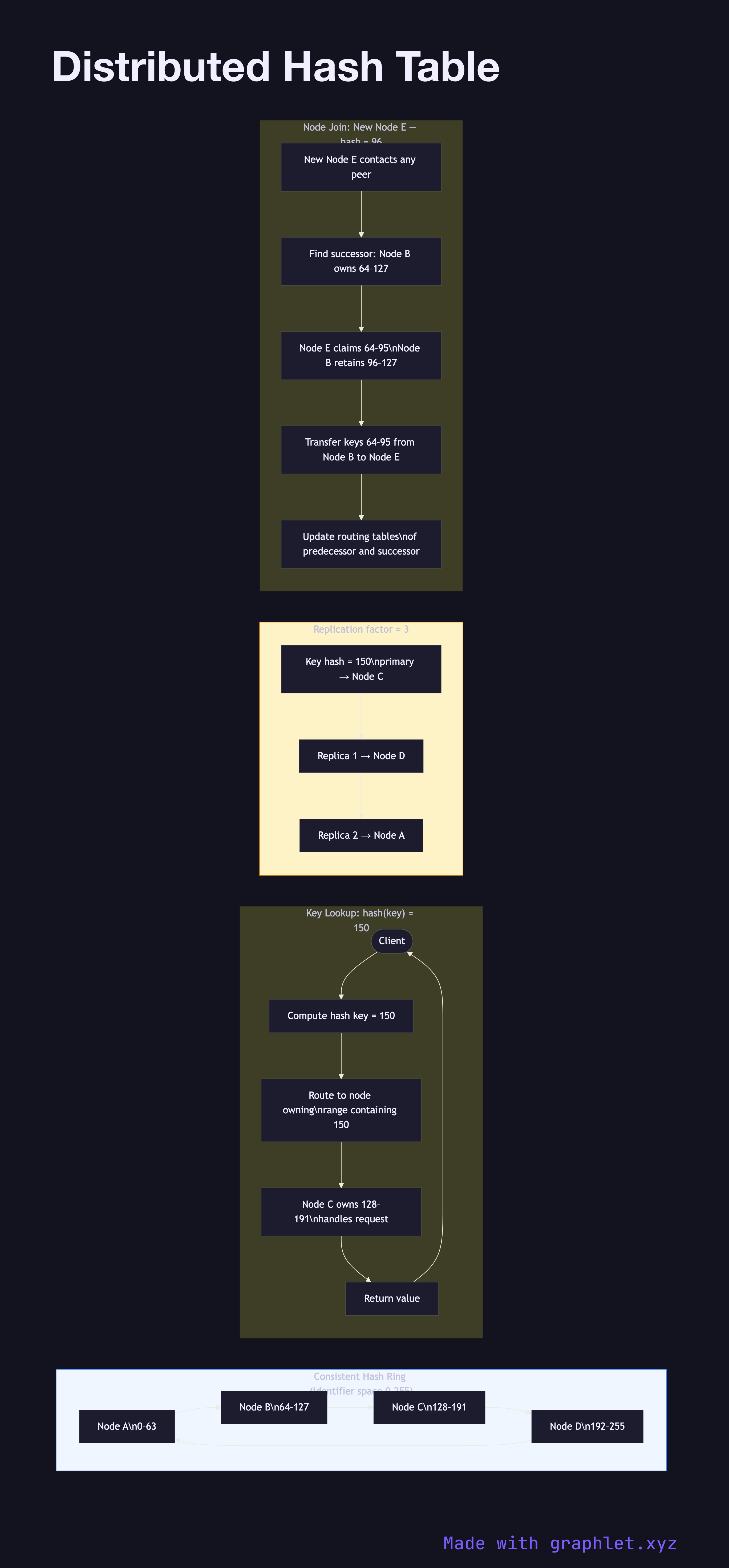

DHTs assign each node and each key a position in a common identifier space (typically 160-bit SHA-1 or 256-bit SHA-256 hashes). Each node is responsible for keys whose hash falls within a contiguous range of that space. When a node joins or leaves, only a fraction of keys — proportional to 1/N — must be remapped.

Key Routing: To look up a key, a node computes hash(key) and forwards the request toward the node responsible for that hash region. Different DHT algorithms achieve this with different routing tables: Chord Protocol uses a finger table enabling O(log N) hops; Kademlia uses XOR distance; Pastry uses prefix routing.

Replication: Keys are typically replicated to the next r successor nodes (commonly r = 3), so the data survives up to r − 1 simultaneous node failures. Read and write quorums follow the same W + R > N logic used by systems like Dynamo and Cassandra.

Node Join: A new node contacts any existing member, computes its own ID, and asks to find its successor. It then transfers the key range it now owns from that successor and updates neighboring nodes' routing tables. Membership changes propagate via Gossip Protocol.

DHTs underpin BitTorrent (Kademlia), IPFS (Kademlia), Cassandra (consistent hashing ring), and Amazon DynamoDB. The combination of consistent hashing, replication, and decentralized routing makes DHTs a foundational building block for any large-scale key-value store.