AI Content Generation Pipeline

An AI content generation pipeline is the end-to-end workflow that takes a structured content brief and produces a published, quality-checked artifact — passing through prompt construction, LLM generation, moderation, optional human review, and publishing.


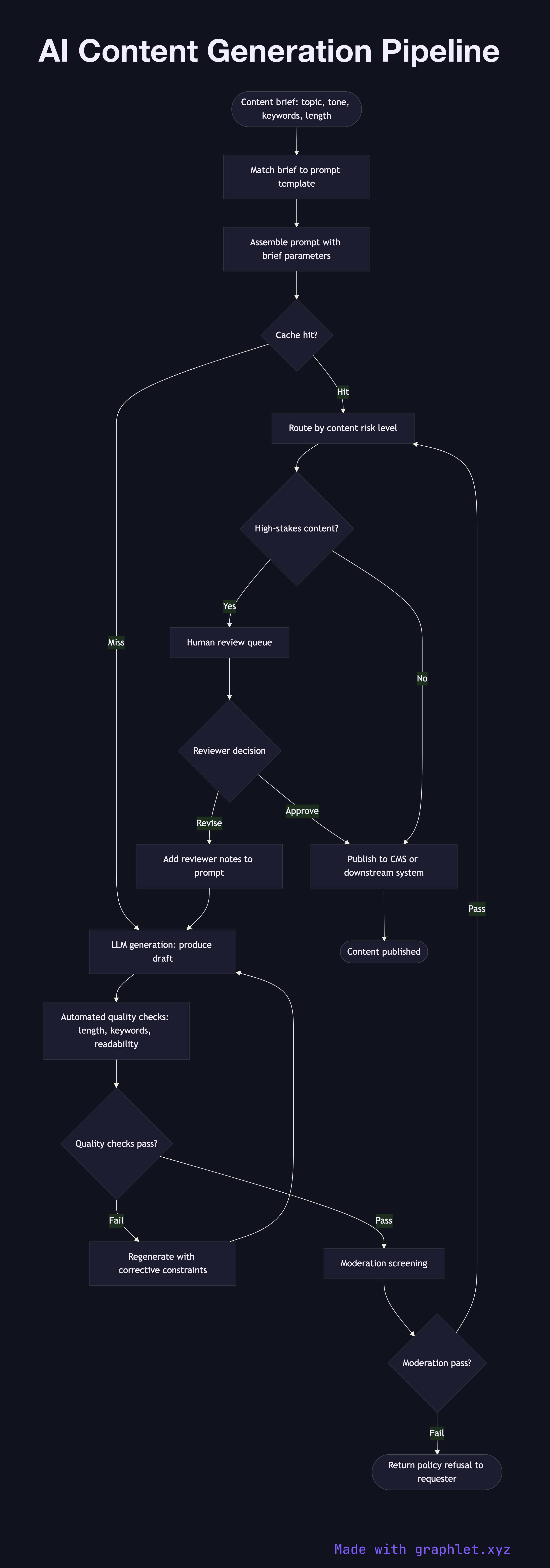

What the diagram shows

This flowchart traces a production content generation workflow suitable for marketing copy, documentation, or product descriptions:

1. Content brief: a structured input specifying topic, tone, target audience, length constraints, brand guidelines, and any required keywords.
2. Template selection: the brief is matched to a prompt template designed for the content type (blog post, product description, email subject line).
3. Prompt assembly: the template is populated with brief parameters and optionally enriched with retrieved examples or brand voice documents (see Prompt Processing Pipeline).
4. Cache lookup: identical briefs hit a prompt cache to avoid redundant generation costs (see Prompt Cache System).
5. LLM generation: the assembled prompt is sent to the LLM, which generates one or more draft outputs.
6. Automated quality checks: drafts are scored for length compliance, keyword inclusion, readability, and factual consistency.
7. Moderation screening: drafts are run through the AI Moderation Pipeline to check for policy violations.
8. Human review gate: high-stakes content (legal, financial, medical) is routed to a human reviewer before publishing. Low-stakes content may auto-publish.
9. Revisions: reviewers can request a regeneration with additional constraints, which restarts the generation step.
10. Publish: the approved content is written to the CMS, database, or downstream publishing system.
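Steps 1 through 5 can be sketched in a few dozen lines of Python. This is a minimal illustration under stated assumptions, not the pipeline's actual implementation: the `ContentBrief` schema, the `TEMPLATES` table, and the `GenerationStage` class are hypothetical, and the LLM is any `prompt -> text` callable so the sketch runs without a real model client. The cache is a plain in-memory dict keyed by a SHA-256 hash of the assembled prompt; a production prompt cache would typically add persistence and eviction.

```python
from __future__ import annotations

import hashlib
from dataclasses import dataclass
from typing import Callable, Optional

@dataclass(frozen=True)
class ContentBrief:
    """Hypothetical brief schema (step 1); field names are illustrative."""
    content_type: str              # e.g. "blog_post", "product_description"
    topic: str
    tone: str
    audience: str
    max_words: int
    keywords: tuple[str, ...] = ()

# Hypothetical prompt templates keyed by content type (step 2).
TEMPLATES = {
    "blog_post": "Write a {tone} blog post about {topic} for {audience} in at most {max_words} words.",
    "product_description": "Write a {tone} product description of {topic} for {audience} in at most {max_words} words.",
    "email_subject": "Write a {tone} email subject line about {topic} for {audience} in at most {max_words} words.",
}

def assemble_prompt(brief: ContentBrief, examples: Optional[list[str]] = None) -> str:
    """Steps 2-3: pick the template for the content type and fill it in."""
    # Raises KeyError if no template exists for this content type.
    prompt = TEMPLATES[brief.content_type].format(
        tone=brief.tone, topic=brief.topic,
        audience=brief.audience, max_words=brief.max_words,
    )
    if brief.keywords:
        prompt += " Include these keywords: " + ", ".join(brief.keywords) + "."
    if examples:  # optional enrichment with retrieved examples or brand voice text
        prompt += "\n\nReference examples:\n" + "\n".join(f"- {e}" for e in examples)
    return prompt

class GenerationStage:
    """Steps 4-5: cache lookup, then an LLM call only on a miss."""

    def __init__(self, llm: Callable[[str], str]):
        self.llm = llm                   # any prompt -> text callable (hypothetical client)
        self.cache: dict[str, str] = {}  # in-memory stand-in for a prompt cache

    def generate(self, brief: ContentBrief, examples: Optional[list[str]] = None) -> str:
        prompt = assemble_prompt(brief, examples)
        key = hashlib.sha256(prompt.encode("utf-8")).hexdigest()
        if key in self.cache:            # hit: identical prompt, no new generation cost
            return self.cache[key]
        draft = self.llm(prompt)         # miss: call the model and remember the result
        self.cache[key] = draft
        return draft
```

Keying on the assembled prompt rather than on the raw brief means identical briefs with identical enrichment share a cache entry, while the same brief enriched with different retrieved examples triggers a fresh generation.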

Why this matters

An automated content pipeline scales content production while maintaining quality and safety guardrails. The human review gate ensures accountability for high-stakes outputs without blocking the automated path for routine content.
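The ordering of guardrails in steps 6 through 8 can be sketched as a single routing function. This is a hedged illustration, not the pipeline's real interface: `Draft`, `Decision`, the action names, and the `moderate` callable (a stand-in for a call into the AI Moderation Pipeline) are all hypothetical. Only the length and keyword checks from step 6 are implemented, and sending failed drafts back for regeneration or blocking flagged drafts are assumed policies, not ones the flowchart specifies.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Callable

HIGH_STAKES = {"legal", "financial", "medical"}  # domains routed to a human (step 8)

@dataclass
class Draft:
    text: str
    domain: str                       # hypothetical tag, e.g. "marketing" or "medical"
    max_words: int
    keywords: tuple[str, ...] = ()

@dataclass
class Decision:
    action: str                       # hypothetical: "regenerate", "blocked", "human_review", "publish"
    reasons: list[str] = field(default_factory=list)

def quality_issues(draft: Draft) -> list[str]:
    """Step 6, partially: length and keyword checks only (no readability or fact checks)."""
    issues = []
    n_words = len(draft.text.split())  # whitespace word count as a rough proxy
    if n_words > draft.max_words:
        issues.append(f"too long: {n_words} > {draft.max_words} words")
    lowered = draft.text.lower()
    for kw in draft.keywords:
        if kw.lower() not in lowered:  # case-insensitive substring match
            issues.append(f"missing keyword: {kw}")
    return issues

def route(draft: Draft, moderate: Callable[[str], list[str]]) -> Decision:
    """Apply steps 6-8 in order; `moderate` returns a list of violation labels."""
    issues = quality_issues(draft)
    if issues:                         # assumed policy: failed checks trigger regeneration
        return Decision("regenerate", issues)
    violations = moderate(draft.text)
    if violations:                     # assumed policy: flagged drafts are blocked
        return Decision("blocked", violations)
    if draft.domain in HIGH_STAKES:    # high-stakes content waits for a reviewer
        return Decision("human_review", [f"high-stakes domain: {draft.domain}"])
    return Decision("publish")         # routine content auto-publishes
```

Because the high-stakes check runs only after both automated gates pass, routine drafts reach `publish` without waiting on a reviewer, while legal, financial, and medical drafts always stop at `human_review`.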