LLM Streaming Response

LLM streaming response is a delivery pattern where the model's output tokens are sent to the client incrementally as they are generated, rather than waiting for the complete response to be assembled — dramatically reducing time-to-first-token and improving perceived responsiveness.

LLM streaming response is a delivery pattern where the model's output tokens are sent to the client incrementally as they are generated, rather than waiting for the complete response to be assembled — dramatically reducing time-to-first-token and improving perceived responsiveness.

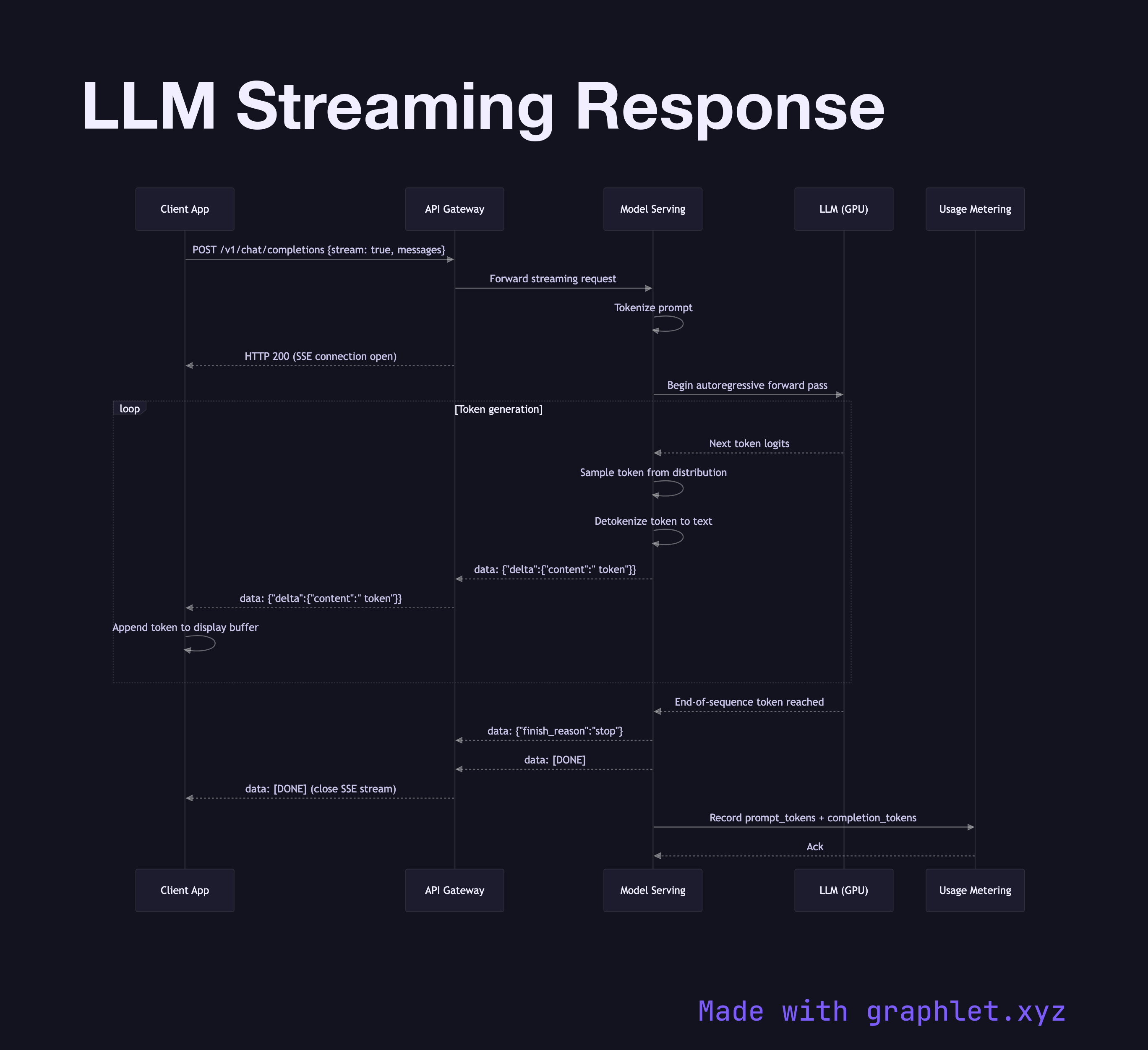

What the diagram shows

This sequence diagram illustrates how server-sent events (SSE) or chunked transfer encoding enables token-level streaming from the model serving layer to the client:

1. Client request: the client sends a completion request with stream: true, signaling it wants incremental delivery. 2. Connection upgrade: the API gateway establishes a persistent HTTP connection using SSE or chunked transfer encoding, keeping the socket open for the duration of generation. 3. Tokenization and inference start: the serving layer tokenizes the prompt and begins the autoregressive forward pass. 4. Token streaming loop: as each output token is sampled from the model's logit distribution, it is immediately detokenized and dispatched as a data: chunk event — typically as {"delta": {"content": " token"}} in OpenAI-compatible APIs. 5. Client renders tokens: the client UI appends each token to the display buffer as it arrives, producing the typewriter effect familiar from chat interfaces. 6. Stream termination: when the model produces an end-of-sequence token or reaches max_tokens, a final data: [DONE] event is sent and the connection is closed. 7. Usage accounting: the full token count (prompt + completion) is reported in the final event or via a separate metering call.

Why this matters

Without streaming, users wait for the entire response before seeing any output — for long answers this can take many seconds. Streaming cuts perceived latency to near-zero and makes AI chat interfaces feel interactive. See LLM Request Flow for the non-streaming variant and AI Chat Application Architecture for the broader application context.